Strengthening Browser Agent Capabilities with Advanced Security Protocols

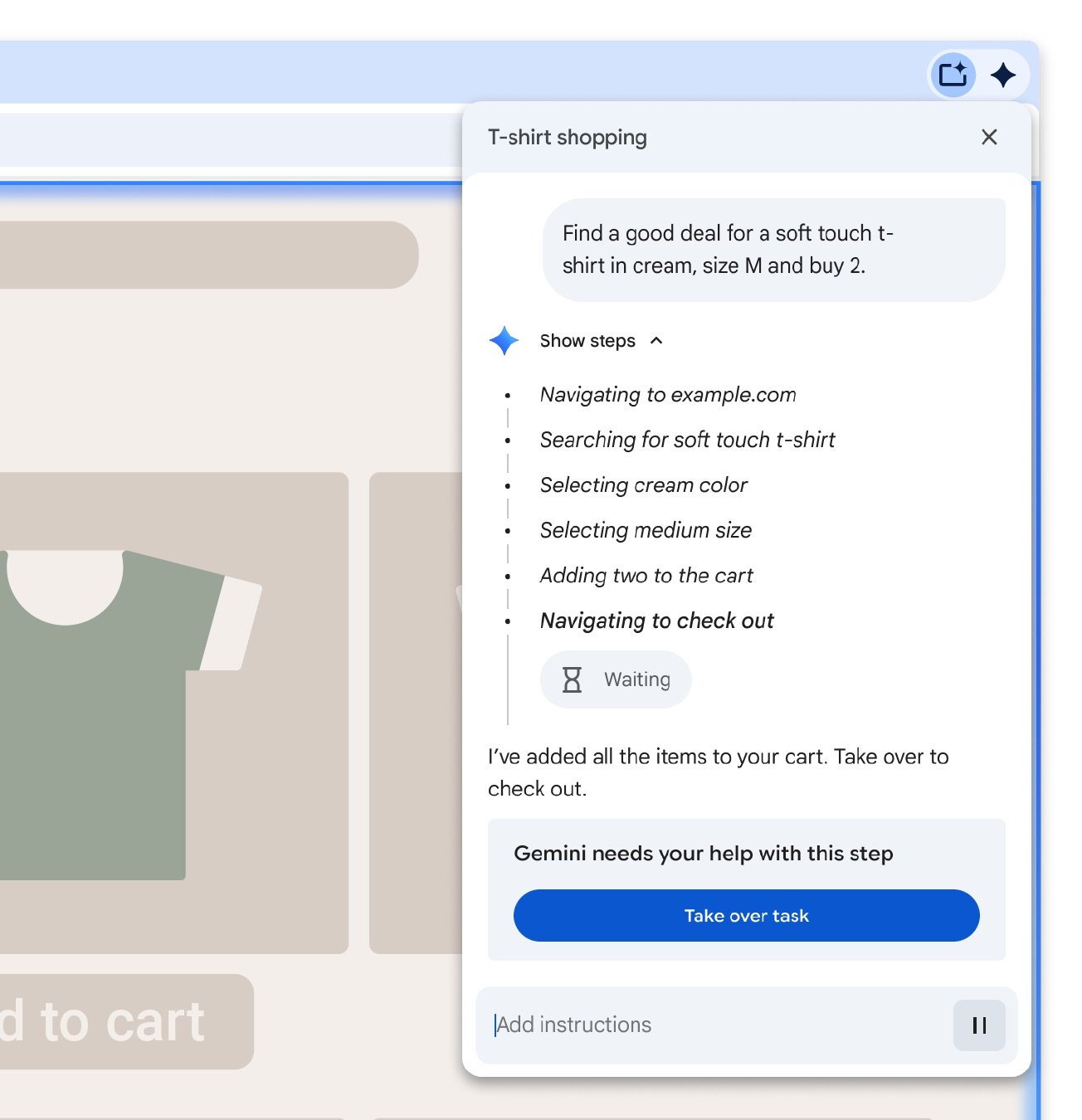

Today’s web browsers are evolving to include autonomous agent features that can execute tasks like making purchases or scheduling appointments on behalf of users. While these smart agents enhance user convenience, they also bring significant security risks, such as data exposure and financial fraud.

A Layered AI Framework for Secure Agent Operations in Chrome

To mitigate these risks, Google has built a multi-tiered AI system into Chrome that oversees agent activity. At its core is the User Alignment Critic, powered by Gemini, which reviews the action plans produced by the planner model. If a proposed action conflicts with the user’s goal, the critic sends it back for reevaluation. To preserve privacy, the critic never accesses sensitive webpage content; it analyzes only metadata about the proposed actions.
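The critic's defining constraint, judging actions from metadata alone, can be illustrated with a minimal sketch. Everything here is hypothetical: the names `ActionMetadata`, `UserGoal`, `critic_approves`, and `review_plan` are invented for illustration, and a simple rule check stands in for the Gemini-based critic. The point is structural: the critic's input type has no field for page content, and rejected steps go back to the planner.

```python
from dataclasses import dataclass
from typing import Callable, Optional

@dataclass(frozen=True)
class ActionMetadata:
    """All the critic sees about an action: no page text, DOM, or screenshots."""
    kind: str           # e.g. "navigate", "click", "type"
    target_origin: str  # origin the action would touch
    description: str    # the planner's one-line summary of the step

@dataclass(frozen=True)
class UserGoal:
    summary: str
    allowed_origins: frozenset
    allowed_kinds: frozenset

def critic_approves(goal: UserGoal, action: ActionMetadata) -> bool:
    # Hypothetical rule-based stand-in for the model-based critic; it inspects
    # only metadata fields, never the content of the page being acted on.
    return (action.target_origin in goal.allowed_origins
            and action.kind in goal.allowed_kinds)

Replanner = Callable[[list[ActionMetadata], list[ActionMetadata]], list[ActionMetadata]]

def review_plan(goal: UserGoal, plan: list[ActionMetadata], replan: Replanner,
                max_rounds: int = 3) -> Optional[list[ActionMetadata]]:
    """Return a plan whose every step the critic approves, or None if no
    approved plan emerges after max_rounds reviews."""
    for _ in range(max_rounds):
        rejected = [a for a in plan if not critic_approves(goal, a)]
        if not rejected:
            return plan
        # Hand the rejected steps back to the planner for reconsideration.
        plan = replan(plan, rejected)
    return None

if __name__ == "__main__":
    goal = UserGoal("Buy a paperback copy of Dune",
                    frozenset({"https://books.example"}),
                    frozenset({"navigate", "click", "type"}))
    plan = [ActionMetadata("navigate", "https://books.example", "open the store"),
            ActionMetadata("navigate", "https://ads.example", "follow a banner")]
    # Toy planner revision: simply drop whatever the critic rejected.
    drop = lambda p, rejected: [a for a in p if a not in rejected]
    approved = review_plan(goal, plan, drop)
    print([a.description for a in approved])  # ['open the store']
```

Keeping page content out of the critic's input type, rather than filtering it at runtime, makes the privacy property easy to audit in a design like this.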

Limiting Data Exposure via Origin Sets

Agent Origin Sets restrict an agent to specific website origins, each classified as either read-only or read-writable. For example, on an online bookstore, the agent can read the book listings it needs to complete the task but is blocked from unrelated page elements such as advertisements or pop-ups. Agents can also be confined to designated iframes on a page, further reducing unnecessary data exposure.

This compartmentalization is designed to prevent cross-origin data leaks: only authorized origins can feed content to the agent, and only writable origins can receive its input. These boundaries also let Chrome’s AI models filter out irrelevant or potentially harmful information before processing it.
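A minimal sketch of read-only versus read-writable origin sets follows. The class and function names (`AgentOriginSets`, `origin_of`, `visible_frames`) are hypothetical, and the rule that every writable origin is also readable is an assumption, not a documented Chrome behavior. Origins are normalized to scheme, host, and non-default port, following the usual web origin definition.

```python
from dataclasses import dataclass, field
from urllib.parse import urlsplit

def origin_of(url: str) -> str:
    """Return a URL's origin (scheme://host[:port]), omitting default ports."""
    parts = urlsplit(url)
    scheme = parts.scheme.lower()
    host = parts.hostname or ""  # urlsplit lowercases the hostname
    port = parts.port
    if port is None or port == {"http": 80, "https": 443}.get(scheme):
        return f"{scheme}://{host}"
    return f"{scheme}://{host}:{port}"

@dataclass
class AgentOriginSets:
    read_only: set[str] = field(default_factory=set)   # may be read, never acted on
    read_write: set[str] = field(default_factory=set)  # may be read, clicked, typed into

    def can_read(self, url: str) -> bool:
        # Assumption: every writable origin is also readable.
        o = origin_of(url)
        return o in self.read_only or o in self.read_write

    def can_write(self, url: str) -> bool:
        return origin_of(url) in self.read_write

def visible_frames(sets: AgentOriginSets, frames: dict[str, str]) -> dict[str, str]:
    """Keep only frames (keyed by URL) whose origin the agent may read, so
    content from other origins, such as ad iframes, never reaches the model."""
    return {url: text for url, text in frames.items() if sets.can_read(url)}
```

With `read_write={"https://books.example"}`, a bookstore listing frame survives `visible_frames` while an iframe from `https://ads.example` is dropped before the model sees it.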

User Oversight and Consent Mechanisms for Sensitive Actions

An additional monitoring model supervises URL navigation requests initiated by agents and automatically blocks redirects toward suspicious or malicious websites. This safeguard helps thwart exploitation attempts through deceptive links during automated browsing sessions.
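The article describes this safeguard as a model; the sketch below substitutes a much simpler rule-based check to show where such a gate sits. The deny-list `BLOCKED_HOSTS`, the HTTPS-only rule, and the function names are all hypothetical. The useful idea is that every hop of a redirect chain is vetted, so a benign-looking first link cannot bounce the agent to a malicious site.

```python
from urllib.parse import urlsplit

# Hypothetical deny-list; a real monitor would rely on a model and
# reputation data rather than a hard-coded set.
BLOCKED_HOSTS = {"phish.example", "malware.example"}

def is_blocked_host(host: str) -> bool:
    # Block each listed host and all of its subdomains.
    return any(host == b or host.endswith("." + b) for b in BLOCKED_HOSTS)

def allow_navigation(url: str) -> tuple[bool, str]:
    """Return (allowed, reason) for a single agent-initiated navigation."""
    parts = urlsplit(url)
    if parts.scheme != "https":
        return False, "non-HTTPS scheme"
    host = parts.hostname or ""
    if not host:
        return False, "missing host"
    if is_blocked_host(host):
        return False, f"blocked host {host}"
    return True, "ok"

def vet_redirect_chain(chain: list[str]) -> tuple[bool, str]:
    """Check every hop; stop at the first one the monitor rejects."""
    for hop in chain:
        ok, reason = allow_navigation(hop)
        if not ok:
            return False, f"{hop}: {reason}"
    return True, "ok"
```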

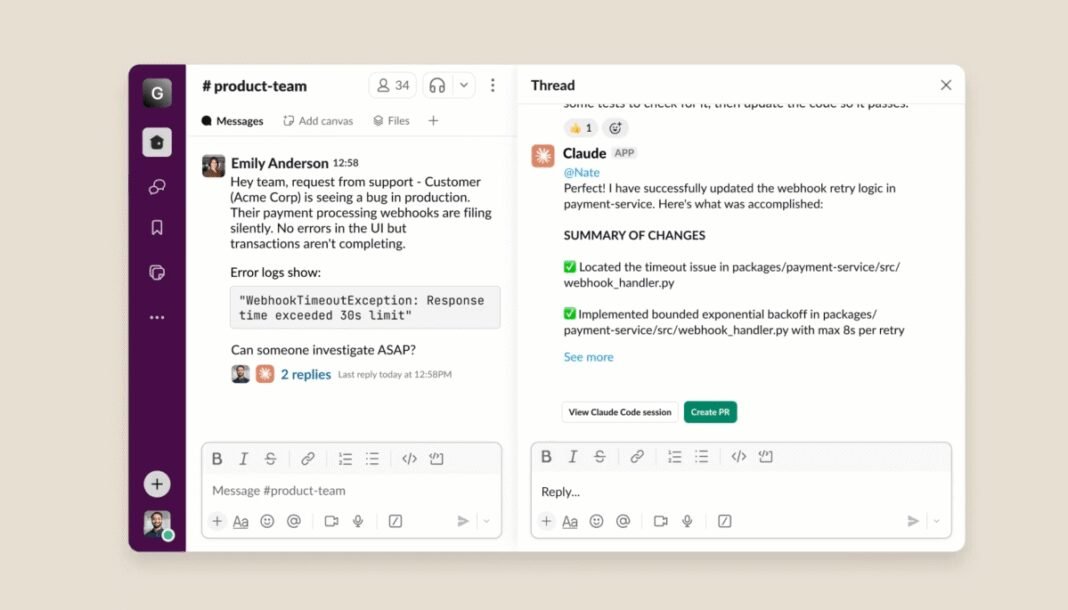

User control remains essential on sensitive sites such as financial institutions or healthcare portals: the agent must obtain explicit consent before taking any action involving confidential information. Similarly, using credentials stored in Chrome’s password manager requires direct user authorization, because the AI models never have access to the passwords themselves.

The consent requirement also covers consequential actions: before completing a purchase or sending a message as part of an automated workflow, the browser asks the user for confirmation, keeping the user informed and preventing unauthorized operations.
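The consent rules above amount to a gate in front of action execution, which can be sketched as follows. The policy tables `SENSITIVE_KINDS` and `SENSITIVE_SITE_CATEGORIES`, the `ProposedAction` type, and the callback names are illustrative assumptions, not Chrome's actual criteria or API. The `ask_user` callback stands in for the browser's confirmation prompt.

```python
from dataclasses import dataclass
from typing import Any, Callable

# Illustrative policy tables, not Chrome's actual criteria.
SENSITIVE_KINDS = {"purchase", "send_message", "use_saved_credential"}
SENSITIVE_SITE_CATEGORIES = {"banking", "healthcare"}

@dataclass(frozen=True)
class ProposedAction:
    kind: str           # e.g. "click", "purchase", "send_message"
    site_category: str  # e.g. "shopping", "banking"
    summary: str        # human-readable text shown in the confirmation prompt

class ConsentDenied(Exception):
    """Raised when the user declines a sensitive action."""

def needs_consent(action: ProposedAction) -> bool:
    return (action.kind in SENSITIVE_KINDS
            or action.site_category in SENSITIVE_SITE_CATEGORIES)

def run_with_consent(action: ProposedAction,
                     execute: Callable[[ProposedAction], Any],
                     ask_user: Callable[[str], bool]) -> Any:
    """Execute the action, pausing for explicit user approval when required."""
    if needs_consent(action) and not ask_user(action.summary):
        raise ConsentDenied(action.summary)
    return execute(action)
```

In a design like this, a `use_saved_credential` action would be carried out by browser-side code that fills the form directly, so the password itself never passes through the model; that mirrors the behavior described above, but the mechanism shown is an assumption.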

Defending Against Prompt Injection and Other Emerging Threats

The framework also includes a dedicated prompt-injection classifier that detects and neutralizes malicious attempts to manipulate the agent’s instructions. Continuous testing against adversarial attacks crafted by security researchers strengthens these defenses over time.
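To show where such a classifier sits in the pipeline, here is a deliberately crude sketch. The regex scorer is a toy stand-in for a trained model, and the names `injection_score`, `filter_untrusted`, and `red_team_report` are hypothetical. Untrusted page snippets are scored and dropped above a threshold before they reach the planner, and a small regression harness reports which known attack strings slip through.

```python
import re

# Toy stand-in for a trained prompt-injection classifier: a few regex cues.
INJECTION_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior) instructions", re.I),
    re.compile(r"you are now", re.I),
    re.compile(r"(send|forward|reveal) .*(password|credential|cookie)", re.I),
]

def injection_score(text: str) -> float:
    """Fraction of patterns that match; 0.0 means no cue was found."""
    hits = sum(1 for p in INJECTION_PATTERNS if p.search(text))
    return hits / len(INJECTION_PATTERNS)

def filter_untrusted(snippets: list[str], threshold: float = 0.3) -> list[str]:
    """Drop page snippets the scorer flags before they reach the planner."""
    return [s for s in snippets if injection_score(s) < threshold]

def red_team_report(attacks: list[str], threshold: float = 0.3) -> list[str]:
    """Regression check: return the attack strings that evade the filter."""
    return [a for a in attacks if injection_score(a) < threshold]
```

Running `red_team_report` on a paraphrase such as "Disregard earlier guidance" shows it evading these patterns, which illustrates why real detectors are learned models and why continuous adversarial testing matters.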

An Industry-Wide Commitment to Safe Autonomous Browsing

The effort to secure intelligent browser assistants extends beyond Google; other industry players are developing their own solutions. For example, Perplexity recently released an open-source tool for detecting prompt injection in AI-driven browsers, a clear sign of growing vigilance around autonomous web interactions amid 2026’s expanding digital ecosystem.