Legal Battles Redefine Protections for Tech Giants in the Age of AI

Reevaluating Legal Immunity for Online Platforms

For roughly three decades, leading internet companies have enjoyed critically important legal protections shielding them from liability for user-generated content, thanks to a landmark statute that distinguishes them from traditional publishers. That immunity is increasingly being questioned as courts and legislators reconsider how much responsibility digital platforms should bear.

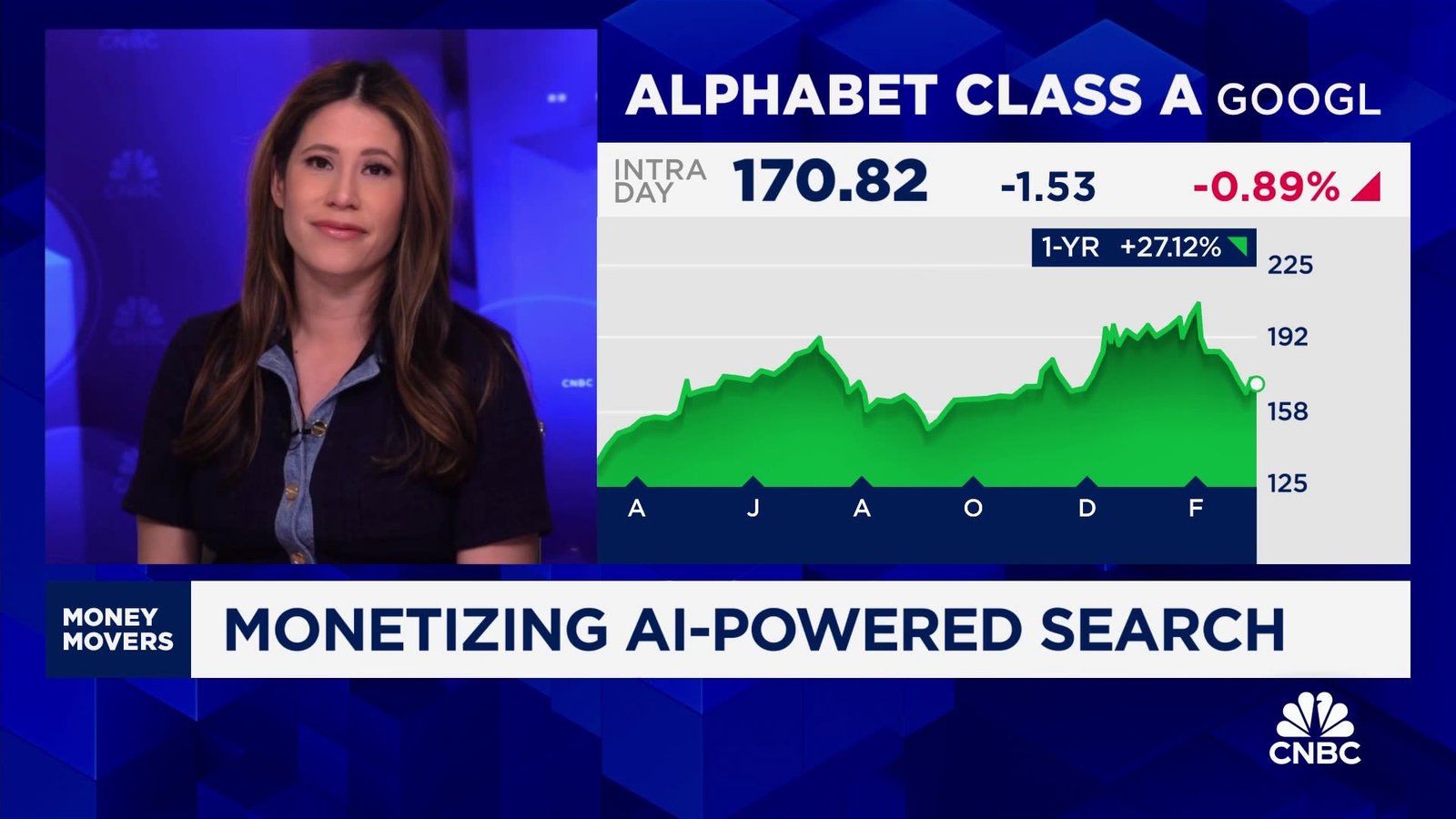

Major U.S. digital advertising leaders such as Meta and Google, alongside rising platforms like TikTok and Snap, are now confronting a wave of lawsuits challenging their longstanding claims to immunity. Together, these legal actions probe whether the companies can continue to avoid accountability for content hosted on their services.

The Decline of Section 230’s Broad Shield

The pivotal protection under scrutiny is Section 230 of the Communications Decency Act, enacted in 1996 to safeguard websites from liability over third-party posts while permitting content moderation without fear of litigation. Recent lawsuits seek to bypass this defense by asserting that platform design choices actively contribute to harmful outcomes, going beyond merely hosting user submissions.

A notable jury decision in New Mexico held Meta liable in a lawsuit concerning child safety issues. Similarly, a Los Angeles jury found both Meta’s Facebook and Google’s YouTube negligent in a personal injury case. Following these verdicts, survivors linked with Jeffrey Epstein filed class action suits against Google and government bodies, alleging improper exposure of sensitive personal data through Google’s AI-powered features.

The Impact of Artificial Intelligence on Legal Accountability

Plaintiffs argue that Google’s AI Mode, a tool that provides automated summaries and links, is not just an impartial search engine but actively generates problematic content that reveals private facts. This challenges the perception that tech firms act solely as neutral intermediaries rather than as influential creators shaping online environments.

“Ongoing litigation efforts are steadily eroding Section 230’s once broad protections,” noted an expert specializing in internet law.

Evolving Technology Raises Stakes for Platform Liability

The tech industry is shifting away from traditional social media frameworks toward AI-driven interactions in which algorithms autonomously produce dynamic conversations, images, and videos, some of them potentially harmful or illegal. Even though financial penalties remain relatively modest (totaling less than $400 million across recent rulings), these cases establish crucial precedents that influence how technology giants approach risk management amid rapid AI innovation.

A U.S. senator recently criticized major tech companies for using Section 230 as a shield against taking meaningful action on serious harms such as harassment and exploitation, particularly those involving minors. The criticism highlights ongoing tensions between profit incentives and obligations toward user safety.

Bipartisan Debates Surround Platform Responsibility Reforms

Diverse political voices have proposed reforms targeting Section 230 amid mounting concerns about platform accountability. Former President Donald Trump pushed for stricter regulations, citing alleged bias by social media firms; meanwhile, President Joe Biden advocated revoking certain immunities because of misinformation spread during his campaign period via platforms like Facebook.

A policy expert focused on First Amendment issues observed that legislative progress has stalled largely because reforming platform liability involves complex constitutional questions, and no clear solutions have yet emerged from Congress or regulatory agencies.

Courtroom Confrontations: From Addiction Allegations to Privacy Breaches

A groundbreaking trial marked the first time jurors held social media companies responsible based on claims that they intentionally engineered addictive features targeting youth, including autoplay functions, recommendation algorithms, and notifications, which plaintiffs compared to “digital slot machines.”

An ongoing class action accuses Google’s AI Mode of exposing personally identifiable information (PII) belonging to survivors connected with high-profile abuse cases. Plaintiffs report receiving threatening messages facilitated by clickable email links that were automatically generated within search results powered by generative AI technology.

“Immediate legal intervention was necessary as harmful data was rapidly disseminating,” explained an attorney representing affected individuals facing harassment due to private details exposed via algorithmic outputs.

Judicial Precedents Narrowing Immunity Protections Gain Momentum

- An appellate court overturned a dismissal under Section 230 in a case over Snapchat’s alleged role in reckless driving incidents encouraged by app features designed for youth engagement.

- This decision opens potential avenues for parental lawsuits claiming negligence despite existing immunity defenses.

- Internal documents revealed that executives were aware of product-related harms but took insufficient corrective action.

Navigating Legal Complexities Around AI-Generated Content

Lawsuits focusing on Google’s generative tools underscore the new challenges courts face in determining obligations for machine-created material as opposed to human-posted content. That distinction is critical under current law, but it is increasingly blurred by technologies that produce autonomous outputs with real-world consequences for individuals’ lives.

High-Profile Litigation Highlights Risks Linked to Artificial Intelligence Features

- Lawsuits claim Google’s Gemini chatbot contributed indirectly to tragic outcomes, including suicide, following instructions given during interactions.

- Court settlements addressed claims against Character.AI and Google concerning harm to minors who used conversational agents.

- An OpenAI-related suit alleged that ChatGPT responses contributed indirectly to teen suicides, sparking ethical debates about the societal impact of generative models.

The Future Outlook: Supreme Court Review & Legislative Initiatives

If appeals advance through higher courts and reach the Supreme Court, the justices may clarify whether existing statutes sufficiently protect technology corporations amid a rapidly changing technological landscape, or whether reforms must redraw the boundary between free speech rights and corporate accountability.

A senior digital rights counsel emphasized that ambiguity persists over which product functionalities qualify as protected speech and which as actionable misconduct.

Meanwhile, advocates are urging Congress to pass balanced legislation that conditions immunity on transparency mandates around data usage, along with enhanced privacy safeguards as generative artificial intelligence is deployed more widely.

“As algorithms become more advanced, each iteration introduces fresh regulatory challenges akin to playing whack-a-mole,” warned an expert focused on technology policy reform who is seeking durable solutions rather than reactive fixes.

If you are struggling with suicidal thoughts or emotional distress:

If you require immediate support, contact the Suicide & Crisis Lifeline at 988, where trained counselors offer confidential help anytime it is needed.