New Limits on AI-Created Images of Real People in Revealing Attire on Elon Musk’s X

Heightened Safeguards Amid Global Concerns

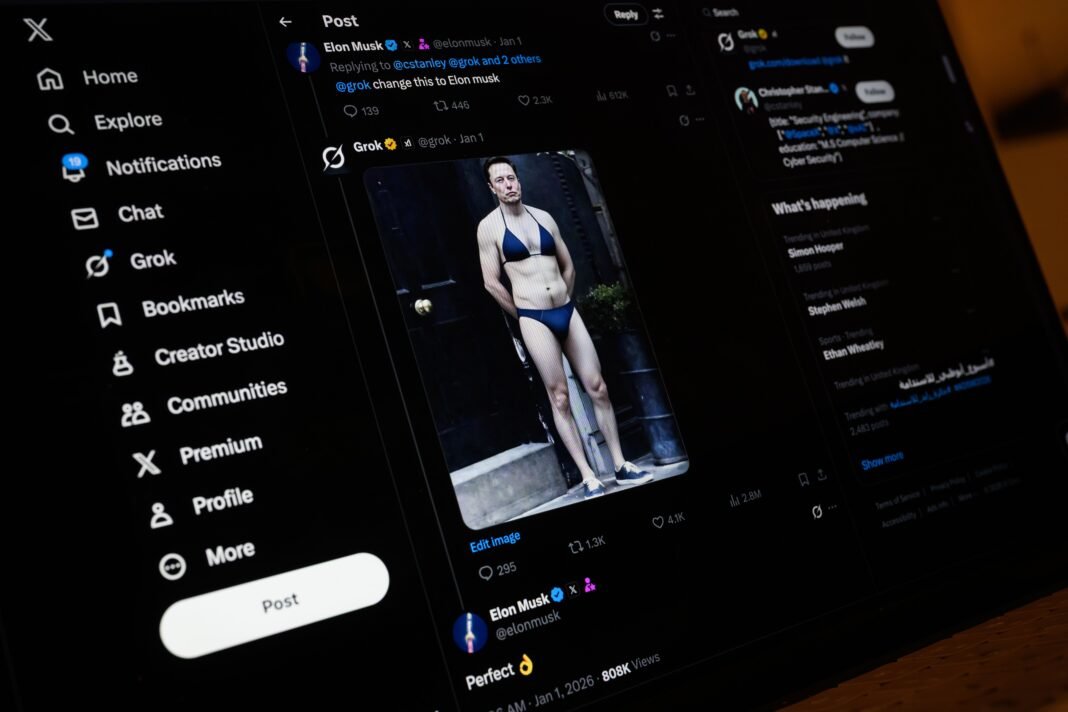

Elon Musk’s social media platform, X, has recently introduced more stringent rules that forbid users from altering or generating images of real individuals wearing bikinis or other revealing clothing. The policy revision followed widespread criticism over the misuse of Grok, X’s AI image generator, which had been exploited to create thousands of harmful nonconsensual “undressing” images and sexualized portrayals involving apparent minors.

Disparities in Moderation Across Grok Platforms

While these new restrictions apply within the X environment, independent evaluations reveal that Grok’s standalone app and website still permit users to produce explicit “undress”-style visuals and adult content. Investigations by experts confirm that even though some controls are active on X itself, Grok’s separate platforms remain comparatively unregulated.

“Photorealistic nudity can still be generated on Grok.com,” explains Paul Bouchaud, lead investigator at Paris-based nonprofit AI Forensics. His team found substantially fewer limitations outside the social media site during extensive testing.

User Experiences Expose Uneven Content Controls

A researcher experimenting with Grok Imagine successfully uploaded a photo of a woman and requested an edit placing her in a bikini; the prompt was fulfilled without restriction. Similarly, trials conducted using free accounts in both the UK and US demonstrated that digitally removing clothing from male subjects did not trigger any blocks. However, when attempting similar edits of male subjects through the Grok app in the UK, users were required to verify their age before proceeding.

Further investigations by outlets such as The Verge and Bellingcat support these findings: sexualized image generation remains feasible in regions like the UK despite ongoing legal scrutiny targeting both Grok and X for enabling such content creation.

Global Regulatory Scrutiny Intensifies

The controversy surrounding Musk-owned entities, including xAI (the developer behind Grok), X itself, and related services, has drawn regulatory attention worldwide as of early 2024. Authorities in countries including Australia, Brazil, Canada, France, India, Indonesia, Ireland, and Malaysia, as well as European Union bodies, have condemned or launched formal inquiries into how these platforms facilitate the production of nonconsensual intimate imagery involving adults and minors alike.

X Implements Geoblocking & Strengthened Moderation Measures

An official Safety account on X announced recent updates introducing technological measures designed to block image edits featuring real people dressed in bikinis or underwear across all user tiers, free and paid alike. Key actions include:

- The introduction of geoblocking for image generation related to revealing attire where local laws prohibit such content;

- A continued focus on removing Child Sexual Abuse Material (CSAM) alongside unauthorized nudity;

- The development of additional safeguards aimed at enhancing moderation systems moving forward.

Musk Clarifies Policies Regarding NSFW AI-Generated Content

In posts on X, Musk addressed concerns about the adult-themed AI-generated content that Grok permits under specific conditions. He explained that with NSFW mode enabled:

“Grok allows upper body nudity depicting imaginary adult humans, not real individuals, in line with R-rated movie standards commonly accepted across American media.”

This approach varies internationally depending upon each country’s legislation governing explicit digital material.

The Controversial Launch of “Spicy Mode”

In August 2023, xAI introduced a feature called “spicy mode,” allowing users to generate sexualized visuals, including nudity, through its video and image generation tools. Shortly after its release, the feature was used to create an AI-generated likeness of a famous pop star undressing, a demonstration of the potential for misuse.

This contrasts with other leading generative AI providers such as OpenAI and Google, which officially restrict nude image creation but struggle to prevent circumvention by savvy users employing prompt injections or jailbreaks.

The Ongoing Struggle Against Evasion Tactics

Since generative chatbots emerged prominently in 2022, there has been an ongoing cat-and-mouse dynamic between developers enforcing safety protocols and users exploiting loopholes to produce prohibited material, including instructions for dangerous activities and explicit imagery banned by platform rules.

Bouchaud notes that Grok’s website and app block some explicit prompts with moderate success, but acknowledges that gaps remain and certain inappropriate outputs still get through.

User Feedback Reflects Mixed Results After Policy Updates

Musk recently challenged followers by asking if anyone could bypass current moderation filters controlling image generation via Grok; responses included shared examples showing continued production of undressing visuals despite restrictions.

On various online forums dedicated to adult content creation using AI tools:

- Certain participants report success creating nude images/videos after multiple attempts;

- Others complain about increasingly strict censorship making it nearly impossible even to remove shirts from generated figures;

- A few say they are frustrated enough to abandon their accounts because tightened controls limit the creative freedom they previously enjoyed;

- An individual based in the UK lamented total moderation enforcement across four different profiles, leaving only suggestive dialog possible while subjects remained fully clothed.

Navigating Innovation While Upholding Ethical Responsibilities

This evolving landscape highlights a critical challenge facing emerging generative technologies: how can companies encourage innovation while preventing exploitation? As regulators ramp up oversight globally and public awareness grows, the pressure intensifies for platforms like Elon Musk’s X, xAI, and Grok to refine policies that protect against abuse without stifling legitimate creative expression.

The coming months will likely bring further developments shaping this complex intersection between artificial intelligence capabilities and societal expectations around privacy, consent, and safety.