in-Depth Insight into MetaS Llama AI Model Series

Meta has introduced its proprietary generative AI lineup called Llama, setting itself apart by adopting an “open” model philosophy. Unlike many prominent AI systems such as Anthropic’s Claude,Google’s Gemini,xAI’s Grok,and various OpenAI ChatGPT versions-which are primarily accessible via APIs-Llama permits developers to download and operate the models directly under defined licensing terms.

The Architecture and Progression of Llama models

Rather than a single entity, Llama represents a suite of refined AI architectures. The most recent release, Llama 4, unveiled in early 2025, includes three unique variants:

- Scout: Activates 17 billion parameters out of a total 109 billion with an extraordinary context window capable of processing up to 10 million tokens concurrently.

- Maverick: Also utilizes 17 billion active parameters but scales up to a massive total of 400 billion parameters and supports a one-million-token context window.

- Behemoth: Currently under development; anticipated to feature an enormous scale with approximately 288 billion active parameters within two trillion total parameters.

(Tokens are the smallest meaningful units in data inputs-for instance,breaking down “innovation” into segments like “in,” “no,” “va,” “tion.”)

The size of the context window determines how much input data the model can process at once when generating responses. Larger windows allow for better understanding over extended conversations or lengthy documents-scout’s capacity roughly equals reading about seventy-five full-length novels consecutively. Though, extremely large context windows may sometimes lead models to overlook safety protocols or produce overly agreeable answers that could mislead users.

Diverse Multimodal Training Data Enhancing Capabilities

Llama 4 was trained on extensive datasets comprising unlabeled text, images, and video content spanning more than 250 languages worldwide. This comprehensive training enables native multimodal comprehension-a notable advancement beyond earlier versions focused mainly on text-only tasks.

The Scout and Maverick models employ mixture-of-experts (MoE) architectures designed for computational efficiency by activating only select subsets (“experts”) during inference: Scout uses sixteen experts while Maverick engages one hundred twenty-eight experts simultaneously. Behemoth is planned as a teacher model that will guide smaller variants through knowledge distillation once fully released.

Core Functional Strengths Across Llama Variants

Llamas excel at numerous generative applications including software coding assistance; solving advanced mathematical problems; summarizing multilingual documents in languages such as Spanish, Mandarin Chinese, russian, Swahili alongside English; plus analyzing complex files like PDFs or spreadsheets. All current Llama 4 editions accept inputs across text formats and also images and videos for richer interaction possibilities.

- Scout: Tailored for managing extensive workflows involving vast datasets requiring deep contextual awareness over long spans.

- Maverick: Strikes balance between reasoning depth and response speed-ideal for chatbot implementations or technical assistants demanding rapid yet precise replies.

- Behemoth: Designed primarily for cutting-edge scientific research where immense parameter counts enable sophisticated modeling power across STEM disciplines.

Llamas can also integrate external tools such as DuckDuckGo Search API enabling real-time fact retrieval on current events; Wolfram Alpha API supporting complex scientific queries; plus embedded Python interpreters allowing live code execution validation-all configurable rather than default features out-of-the-box.

Avenues to Access Meta’s Llama Technology Today

If you seek casual conversational experiences powered by Meta’s ecosystem technologies-Llama drives chatbots embedded within platforms like Facebook Messenger, TikTok, Spark AR Studio, META.ai services deployed across nearly fifty countries globally.

Beyond end-user applications:

– Scout & Maverick are available through developer hubs including Hugging Face integrations,

– Cloud-hosted deployments run on major providers such as AWS,

– Behemoth remains in training stages but is expected soon.

Meta collaborates closely with over thirty hosting partners offering enhanced services built atop core LLaMA capabilities-including proprietary data referencing options or ultra-low latency operations.

Developers must comply strictly with licensing restrictions limiting deployment scope especially if thier applications exceed seven hundred million monthly active users without explicit authorization from Meta.

Ecosystem Tools supporting Safety & Developer Productivity

- llama Guard:A content moderation framework detecting harmful material related to hate speech,self-harm criminal activities,and other sensitive categories customizable per language needs.

- PROMPT Guard: A security layer preventing prompt injection attacks aimed at manipulating model behavior maliciously.

- CybSecEval: An assessment toolkit measuring cybersecurity risks posed by deployed instances focusing on threats like automated social engineering campaigns.

- LLaMA Firewall: A runtime protection system blocking unsafe code execution,prompt injections,and risky tool interactions during operation.

- Coding Shield: This dynamic filter screens insecure code suggestions supporting eight programming languages ensuring safer outputs during inference time.

Navigating Limitations & Ethical Challenges Surrounding LLaMA Use

“Despite its strengths,LlaMa currently demonstrates stronger performance predominantly with English-language inputs.”

An essential consideration involves the origin of training data.Meta controversially incorporated copyrighted e-books alongside publicly scraped internet content-including social media posts-to train these models.A recent legal decision favored Meta citing fair use protections,but ongoing copyright infringement concerns persist if generated outputs reproduce protected works verbatim without permission.

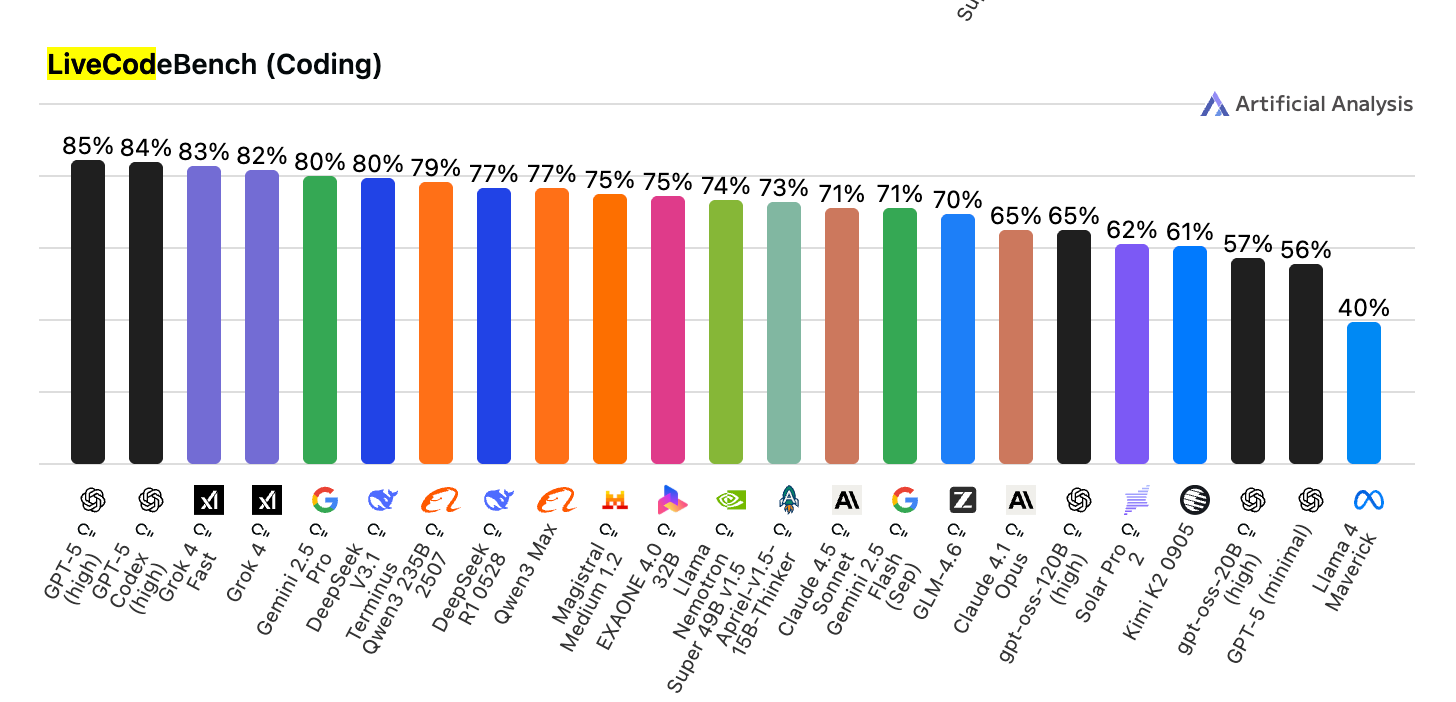

Moreover,LlaMa-generated programming solutions tend toward higher error rates compared against competitors.On recent LiveCodeBench tests,Maverick achieved approximately forty-two percent accuracy versus GPT-5 scoring above eighty-seven percent.This highlights the critical need for expert human review before deploying any automatically generated software components.

generative AIs-including all iterations of LlaMa-continue facing hallucination issues producing plausible yet incorrect information across domains ranging from legal advice,to emotional chatbot interactions.This phenomenon necessitates cautious interpretation when relying upon their outputs in high-stakes scenarios.