The Evolution of Cerebras Systems: From Near Failure to AI Chip Innovator

Transforming AI Hardware with an Enterprising Concept

Cerebras Systems introduced a revolutionary idea that defied long-standing semiconductor industry practice. Rather than continuing the trend of shrinking chips and packing ever more transistors into smaller areas, the company set out to build one massive chip by using an entire silicon wafer as a single integrated processor. The design promised remarkable computational power for artificial intelligence workloads while eliminating the complexity and latency of connecting many smaller chips.

Conquering Extraordinary Technical Obstacles

The vision was bold, but no one had ever manufactured such a gigantic chip. The engineering challenges were formidable: delivering consistent power, dissipating heat effectively, and ensuring seamless data flow across a wafer 58 times larger than standard chips pushed existing fabrication technology to its limits. Cooling and packaging solutions at this scale simply did not exist.

Solving the Packaging Enigma

After working with TSMC on the chip design, Cerebras encountered its most daunting hurdle: packaging. Mounting the enormous silicon wafer onto circuit boards without damaging it, while still maintaining efficient power delivery and thermal regulation, required new approaches. The team invested significant time and money in trial-and-error experimentation, sacrificing numerous prototypes before finding techniques that worked.

A Critical Turning Point Amid Financial Hardship

By mid-2019, after spending nearly $200 million at a burn rate of roughly $8 million per month, Cerebras faced imminent collapse. Despite repeatedly reporting setbacks to investors, CEO Andrew Feldman remained resolute: giving up was not an option.

“Continuing forward was our only choice; stopping meant extinction,” Feldman recalled of those pivotal months.

The breakthrough came when the team developed custom machinery that could secure the wafer without cracking it (machines able to fasten dozens of screws simultaneously) and engineered novel cooling systems tailored specifically to the chip's unique architecture.

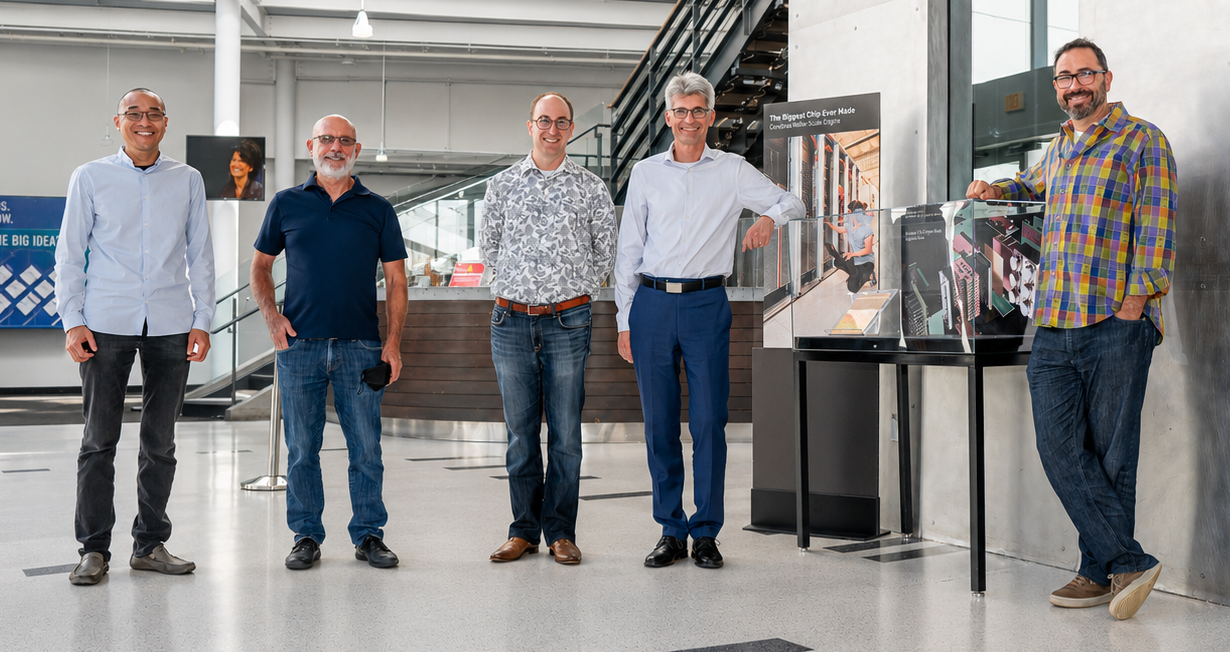

A Landmark Achievement That Redefined Possibilities

Powering up the fully assembled wafer-scale engine marked a historic victory against overwhelming odds. The founding team watched in silence as the indicator lights flickered to life. The moment was far more exhilarating than any ordinary computer start-up, because it represented years of relentless dedication finally bearing fruit.

Strategic Collaborations Fueling Market Expansion

Cerebras’ pioneering technology drew early interest from influential organizations such as OpenAI. Acquisition talks took place at one point but fell through amid internal leadership disagreements at OpenAI. Today, OpenAI is both a customer and a strategic partner: it has extended Cerebras a $1 billion loan tied to warrants that could be worth billions at the company's current post-IPO valuation of more than $60 billion.

The partnership includes exclusivity provisions that temporarily bar Cerebras from selling certain products to OpenAI's competitors, such as Anthropic. According to the company, the measure is primarily intended to prioritize capacity allocation rather than to impose permanent market restrictions.

A Measured Growth Strategy Amid Rising Demand

Although global spending on AI inference hardware is projected to exceed $30 billion annually, with growth expected to accelerate, Cerebras is taking a cautious approach to expanding its client base rather than scaling too quickly and risking operational strain or diluted service quality. Feldman explains the strategy with a metaphor:

“Think about entering an all-you-can-eat buffet; rather than sampling every dish simultaneously, risking overwhelm or wastefulness, we focus first on mastering select offerings before broadening our reach.”

Pioneering Future Frontiers in AI Computing Technology

Cerebras’ story shows how visionary engineering combined with unwavering persistence can push technological boundaries even under severe financial pressure. As AI models continue to grow exponentially (some recent language models contain hundreds of billions of parameters), specialized hardware such as wafer-scale engines is becoming increasingly vital for efficient training and inference.

- 2024 Industry Update: Worldwide investment in AI-specific chips is forecast to exceed $45 billion this year alone, driven by rapid adoption across sectors including medical imaging diagnostics and autonomous transportation.

- Tangible Use Case: Leading research institutions now use Cerebras platforms for complex simulations that require ultra-fast parallel processing beyond the reach of conventional GPUs or multi-chip CPU arrays linked by networking layers.

- Sustainability Contribution: By integrating advanced liquid cooling designed specifically for large-scale silicon wafers, rather than relying solely on the energy-intensive air conditioning common in traditional data centers, Cerebras’ innovations support greener computing infrastructure and can significantly reduce carbon footprints compared with legacy setups.