Google’s Gemini 3 Flash: Setting a New Standard in AI Efficiency and Speed

Google has introduced its latest AI model, Gemini 3 Flash, engineered to provide fast responses while remaining cost-effective. Building on last month’s Gemini 3 release, this iteration aims to surpass competitors such as OpenAI on speed and efficiency. Gemini 3 Flash now serves as the primary engine behind Google’s Gemini app and powers AI-driven search functionality globally.

Important Advances in Performance Metrics

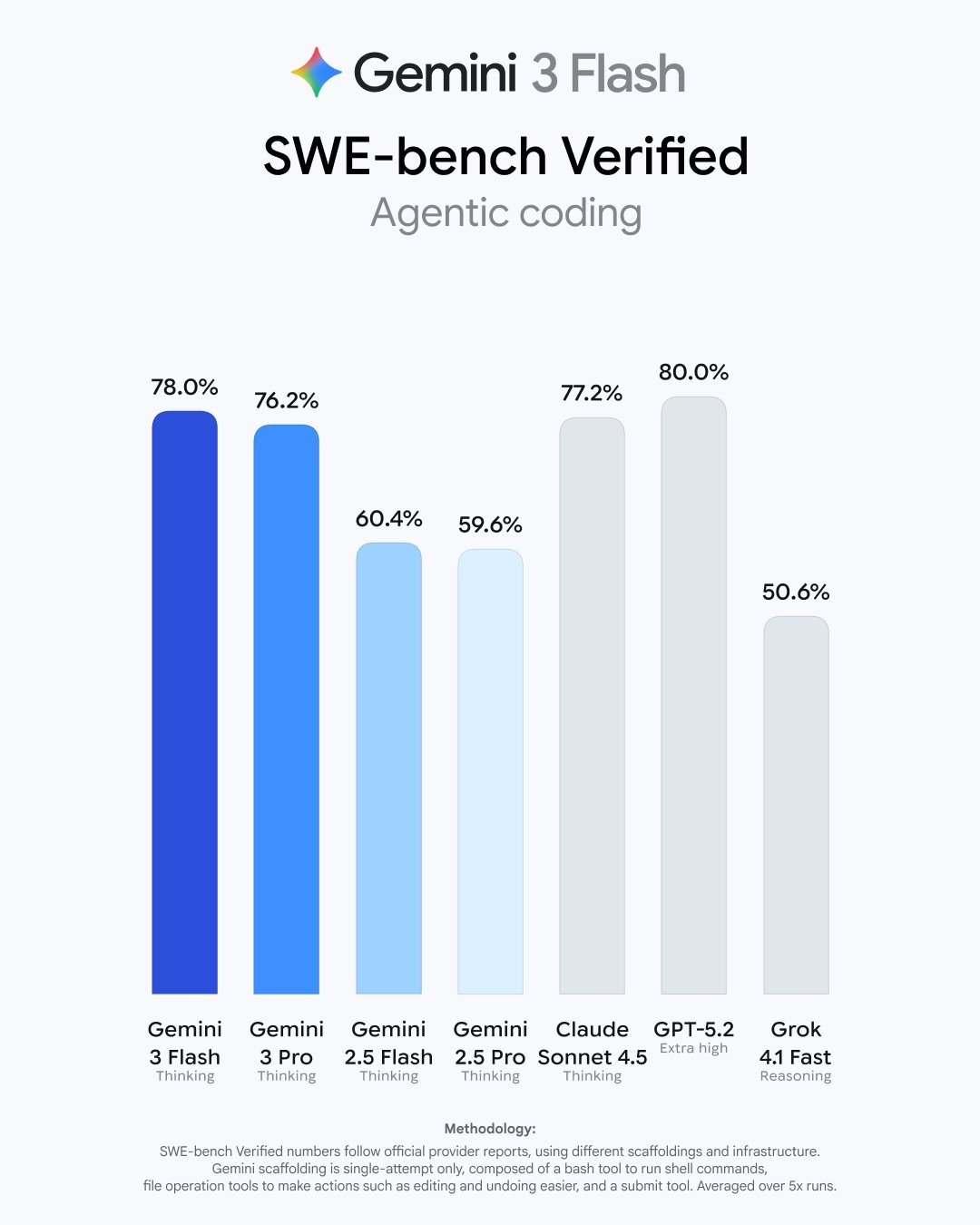

The debut of Gemini 3 Flash arrives just half a year after Google rolled out the Gemini 2.5 Flash model, marking a considerable leap in AI capabilities. In extensive benchmark tests, the latest model not only outperforms its predecessor but also competes closely with leading models like GPT-5.2 and Gemini 3 Pro across multiple evaluation criteria.

For example, on the Humanity’s Last Exam benchmark, which measures cross-disciplinary knowledge without external tools, Gemini 3 Flash scored a notable 33.7%. This is nearly on par with GPT-5.2’s 34.5% and significantly exceeds earlier versions such as Gemini 2.5 Flash, which managed only 11%. Additionally, on the multimodal reasoning benchmark MMMU-Pro, which tests comprehension across text, images, and other data types, Gemini 3 Flash led all contenders with an accuracy rate of 81.2%.

A Multifaceted Model Tailored for Everyday Use

The integration of Gemini 3 Flash as the default option within Google’s consumer applications means users automatically benefit from advanced multimodal processing capabilities. Whether it involves submitting short video clips for feedback or uploading audio recordings for analysis or quiz creation purposes, this model excels at interpreting diverse input formats seamlessly.

This adaptability extends into creative domains too: users can sketch rough illustrations to receive instant identification or obtain detailed visual outputs featuring images and tables that align precisely with their queries’ intent.

User-Centric Applications Demonstrating Versatility

- A travel blogger uploads drone footage from a recent trip to get personalized editing suggestions based on scene analysis.

- An aspiring musician records vocal exercises; the system offers pitch correction advice alongside customized practice quizzes derived from their performance.

- An entrepreneur drafts business concepts through prompts within the Gemini app to rapidly prototype ideas without needing programming skills.

Enterprise Adoption & Developer Empowerment

The reach of Gemini 3 Flash extends well beyond individual consumers into corporate environments, where firms like JetBrains and Figma have incorporated it via Vertex AI along with Google’s enterprise solutions platform.

Developers gain early access through API previews and through tools such as Antigravity, Google’s newly launched coding assistant, which supports faster development cycles with sophisticated code comprehension features.

The Pro version achieves a 78% score on the SWE-bench Verified coding benchmark, second only to GPT-5.2, and is optimized for tasks including video content interpretation, automated data extraction workflows, and interactive visual Q&A sessions, thanks to its combination of speed and precision.

Evolving Pricing Reflecting Enhanced Capabilities

The pricing framework positions Gemini 3 Flash competitively: input tokens are charged at $0.50 per million and output tokens at $3.00 per million, a slight increase over the previous rates ($0.30 input / $2.50 output). The adjustment is justified by significant improvements, including processing roughly three times faster than earlier models and approximately 30% fewer tokens consumed during complex reasoning tasks.
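To make the trade-off between higher per-token prices and lower token consumption concrete, here is a minimal sketch of the arithmetic. The per-million prices come from the figures quoted above; the workload sizes are hypothetical, and applying the roughly 30% token reduction to output tokens only is an assumption made for illustration, not something the pricing announcement specifies.

```python
# Prices in USD per million tokens, as quoted in the article.
OLD_PRICES = {"input": 0.30, "output": 2.50}
NEW_PRICES = {"input": 0.50, "output": 3.00}


def workload_cost(input_tokens, output_tokens, prices):
    """Return the cost in USD of a workload at the given per-million prices."""
    total = input_tokens * prices["input"] + output_tokens * prices["output"]
    return total / 1_000_000


# Hypothetical workload: 10M input tokens and 2M output tokens at the old rates.
input_tokens = 10_000_000
old_output_tokens = 2_000_000

# Assumption: the ~30% reduction applies to output (reasoning) tokens only.
# Integer arithmetic avoids floating-point rounding in the token count.
new_output_tokens = old_output_tokens * 70 // 100

old_cost = workload_cost(input_tokens, old_output_tokens, OLD_PRICES)
new_cost = workload_cost(input_tokens, new_output_tokens, NEW_PRICES)

print(f"Old rates: ${old_cost:.2f}")
print(f"New rates: ${new_cost:.2f}")
```

Under these assumptions the workload costs $8.00 at the old rates and $9.20 at the new ones, so the token savings offset only part of the price increase for an input-heavy job. Workloads dominated by reasoning output would see a smaller gap.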

“Flash acts as our dependable powerhouse,” stated a senior product lead during an internal briefing.

“Its balance between affordability and performance makes it ideal for large-scale processing needs across various industries.”

Navigating Intense Competition Amid Rapid Technological Progression

The rivalry among major technology companies remains fierce. Since the launch of prior iterations last year, demand has surged dramatically, with over one trillion tokens processed daily via Google’s API services worldwide, a clear indicator of escalating competition against OpenAI’s platforms.

“The momentum driving innovation industry-wide propels every participant forward,” remarked leadership at Google.

“Emerging benchmarks push us all toward breakthroughs that ultimately benefit end-users.”

This competitive environment has also fueled rapid advancements from OpenAI, including releases like GPT-5.2 paired with new image generation technologies. Reported eightfold growth in ChatGPT usage volumes since late last year highlights the intensifying market dynamics surrounding generative AI globally.

The Future Path: Ongoing Refinement Through Collaboration & Rigorous Evaluation

The continuous advancement of large language models hinges not only on technological innovation but also on collaborative benchmarking initiatives that standardize evaluation methods across platforms. Such initiatives ensure transparency about strengths while pinpointing areas ripe for improvement in next-generation applications spanning education, enterprise productivity, and creative sectors alike.