Arcee AI Introduces Trinity: A Groundbreaking Open-Source Foundation Model

Emerging Player Disrupts the AI Industry

In a market largely dominated by tech giants such as Google, Meta, Microsoft, and Amazon, alongside prominent model developers like OpenAI and Anthropic, a nimble startup is charting its own course. Arcee AI, a compact team of just 30 experts, has unveiled Trinity: an open-source foundation model released under the Apache license. With 400 billion parameters, Trinity is one of the largest open-source models ever released by a U.S.-based company.

Performance That Rivals Established Models

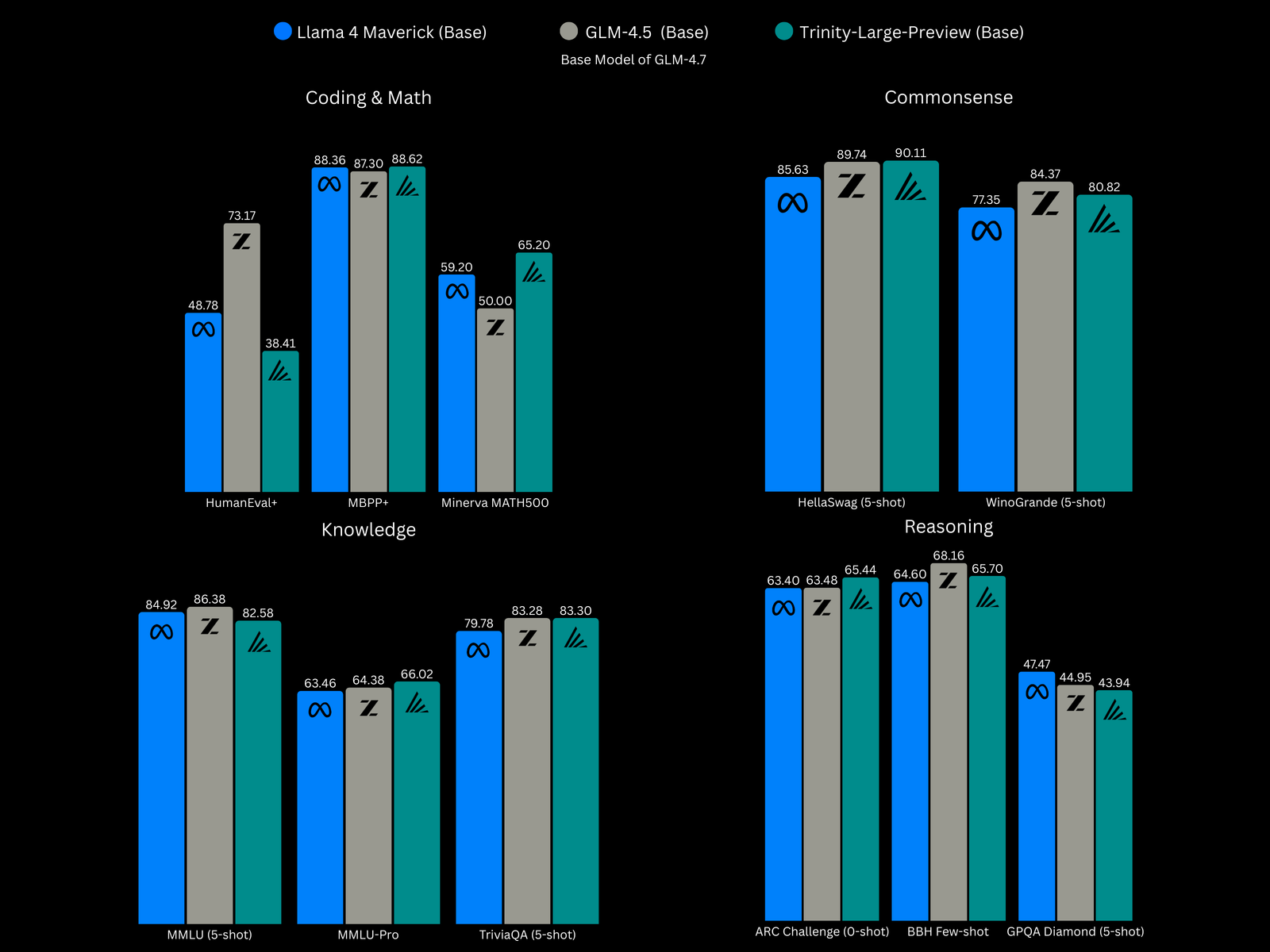

Internal evaluations indicate that Trinity’s base models, with minimal fine-tuning, match or exceed leading competitors such as Meta’s Llama 4 Maverick 400B and GLM-4.5 from China’s Tsinghua University. The assessments cover programming, mathematical problem-solving, commonsense reasoning, knowledge retention, and logical inference.

Current Strengths and Planned Enhancements

Trinity is primarily engineered to assist with coding tasks and to manage intricate multi-step workflows such as autonomous agent operations. Unlike some cutting-edge rivals that accept multiple input types, including images and audio, Trinity currently processes text only. Future updates aim to add vision-based understanding and speech-to-text capabilities.

An Obvious Alternative for Developers

The creators at Arcee prioritize building trust within developer communities and academia by offering an openly accessible foundation model free from restrictive licensing or geopolitical entanglements often associated with foreign-developed solutions. This strategy notably appeals to U.S.-based organizations seeking reliable open-source options without dependence on Chinese-originated technologies.

“To earn genuine developer loyalty,” explains CTO Lucas Atkins, “you must provide the most capable open-weighted model available.”

The Making of Trinity: Innovation on a Lean Budget

The rapid development timeline is remarkable given Arcee’s limited resources compared with industry titans: all three iterations of its foundation models were trained within six months using $20 million worth of compute spread across 2,048 Nvidia Blackwell B300 GPUs. That sum is a fraction of the company’s $50 million in total funding.

This swift progress was driven by deep expertise in voice agent technology prior to venturing into large language modeling territory. The team’s commitment involved long hours fueled more by passion than vast financial backing typical at larger labs investing hundreds of millions annually in similar projects.

Evolving From Custom Solutions To Proprietary Foundations

The company initially specialized in fine-tuning existing open-source architectures such as Llama or Mistral for enterprise clients, including major telecommunications firms. It shifted toward building its own foundation models when client needs outgrew what customization alone could deliver.

This pivot was partly motivated by growing concerns over reliance on third-party providers amid escalating geopolitical tensions that restrict access to certain foreign-developed technologies within U.S. enterprises.

Permanently Open Licensing Sets Them Apart

A defining feature is Arcee’s adoption of the Apache license, which ensures permanent openness. This stands in stark contrast with Meta’s Llama series, whose more restrictive licenses limit commercial use and have sparked debate among community advocates about whether they truly adhere to open-source principles.

“The United States requires a frontier-grade alternative that remains permanently accessible under permissive licensing,” states CEO Mark McQuade. “That necessity inspired us to build Trinity.”

Diverse Model Variants Designed For Varied Needs

- Trinity Large Preview: A lightly post-trained instruct variant optimized for following human commands; ideal for conversational applications;

- Trinity Large Base: The unaltered base model without additional training;

- TrueBase: A pristine version free of instruct data or built-in assumptions, intended for researchers or enterprises that require full control over customization.

The entire suite is freely downloadable today. Hosted API services are expected soon, with competitive pricing in line with industry standards (for example, starting around $0.045 per thousand tokens processed) and limited free usage during the initial rollout.
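As a rough illustration of what that example rate would mean in practice, the Python sketch below estimates API spend from a token count. The rate constant simply reuses the illustrative $0.045 figure quoted above, and the function name `estimate_cost` is hypothetical; neither reflects a confirmed Arcee price list or API.

```python
from decimal import Decimal

# Assumption: the example rate quoted in the article, not confirmed pricing.
PRICE_PER_1K_TOKENS = Decimal("0.045")


def estimate_cost(tokens: int, price_per_1k: Decimal = PRICE_PER_1K_TOKENS) -> Decimal:
    """Return the estimated cost in USD of processing `tokens` tokens."""
    if tokens < 0:
        raise ValueError("tokens must be non-negative")
    # Decimal avoids the rounding drift that binary floats introduce in money math.
    return (Decimal(tokens) / Decimal(1000)) * price_per_1k


if __name__ == "__main__":
    for count in (2_500, 1_000_000):
        print(f"{count:>9,} tokens: about ${estimate_cost(count):.4f}")
```

At that assumed rate, a workload of one million tokens would come to about $45, which gives a sense of scale when comparing against other hosted models.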

The Wider Meaning and Future Outlook

This development underscores how smaller startups can make vital contributions despite having far fewer resources than tech behemoths that invest billions annually in artificial intelligence research. According to market analysts, global investment in AI reached nearly $100 billion in early 2024 alone. It also highlights growing demand among organizations for transparent alternatives unburdened by geopolitical risks or opaque licensing terms.

If Trinity is widely adopted across academia and industry, much as platforms like Hugging Face previously democratized access to models, it could change perceptions of who controls foundational AI technologies while fostering innovation through unrestricted global collaboration.