Enhancing Clarity in Deep Learning: The Emergence of Transparent Large Language Models

Understanding the decision-making mechanisms within deep learning frameworks continues to be a significant hurdle. Challenges such as addressing biases in AI-generated political narratives, curbing overly agreeable responses from conversational agents like ChatGPT, and reducing hallucinations in massive neural networks with billions of parameters highlight the complexity of interpreting these systems.

Revolutionizing Explainability in AI Systems

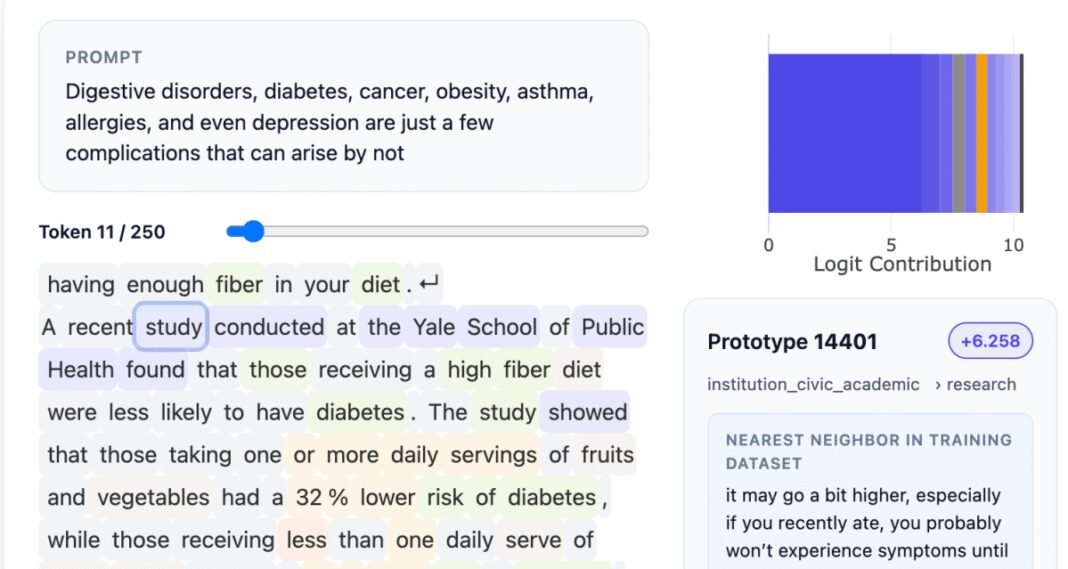

A pioneering approach has been introduced by Guide Labs, a startup based in San Francisco. Their open-source large language model (LLM), Steerling-8B, has 8 billion parameters and is designed so that every token the model produces can be traced back to its source in the training data.

This capability not only facilitates verification of factual content but also enables deeper exploration into how the model processes subtle elements such as humor or constructs around gender identity. Such transparency is essential for fostering trust and accountability within artificial intelligence applications.

Building Interpretability Into Model Architecture

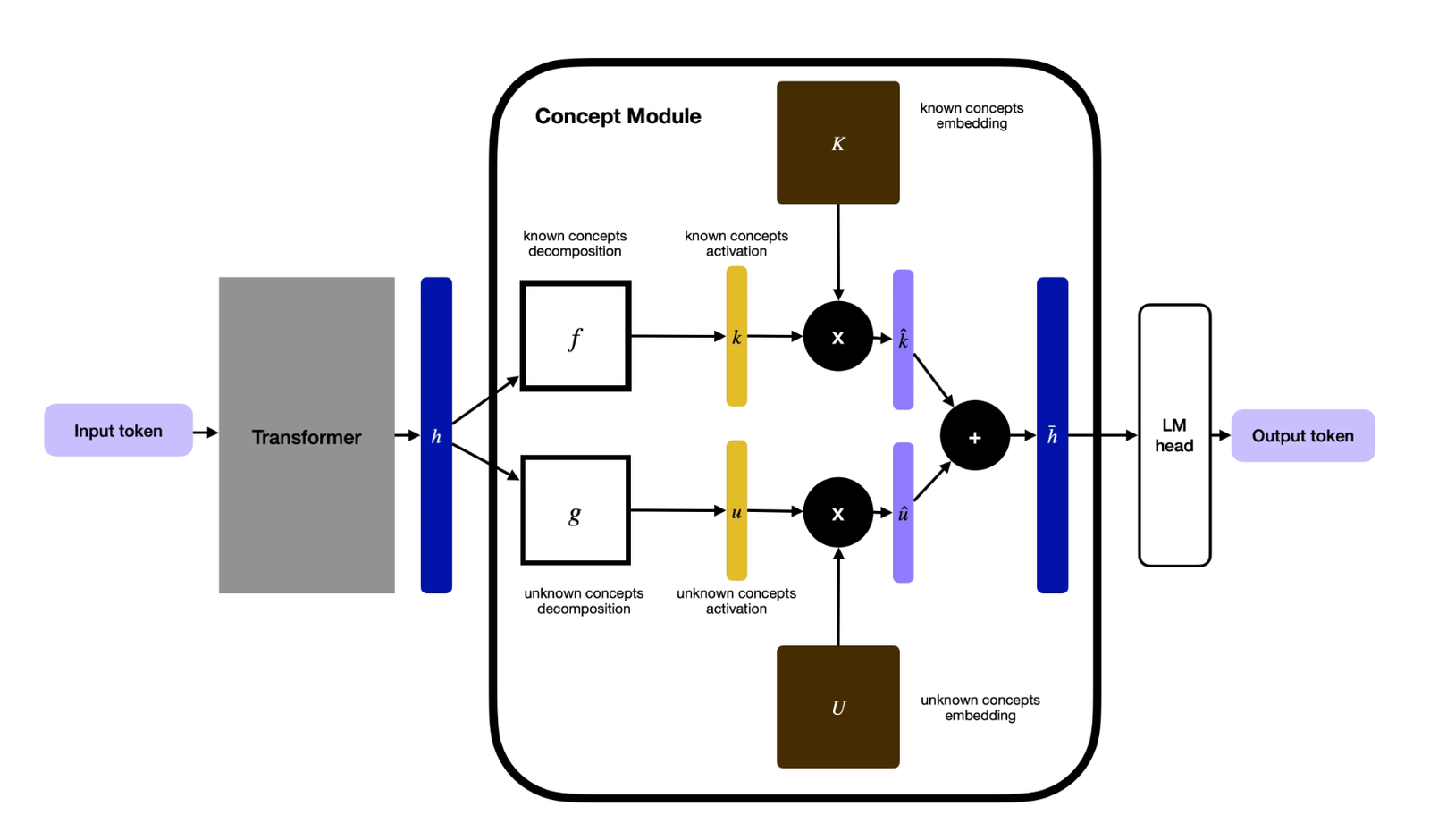

The core innovation involves integrating a concept layer inside the LLM that organizes training data into distinct categories. Although this requires substantial up-front annotation effort, supplementary AI tools have made it feasible to scale. Conventional interpretability methods often analyze black-box models after the fact, much like neuroscientific probing. Guide Labs instead builds explainability into the system's foundation from the start.

“Rather than attempting to reverse-engineer understanding through post-hoc neuroscience-like techniques on existing models, we embed interpretability as an essential feature from day one,” explains their CEO.
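To make the idea of a concept layer concrete, here is a minimal sketch in the style of a generic concept bottleneck. It is not Guide Labs' architecture, which this article does not detail. The concept names, vocabulary, weights, and function names are all hypothetical and chosen only for illustration. The point it shows is that when every output logit is a weighted sum of named concept activations, each token's score can be split into per-concept contributions.

```python
from typing import Dict, List

# Hypothetical concepts, vocabulary, and weights (illustration only;
# a real model would learn these and have far more of each).
CONCEPTS: List[str] = ["finance", "humor", "quantum_computing"]
VOCAB: List[str] = ["loan", "joke", "qubit"]

# One row per concept: maps a 2-dimensional hidden state to a concept score.
W_CONCEPT: List[List[float]] = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
# One row per token: maps concept activations to that token's logit.
W_OUT: List[List[float]] = [[2.0, 0.0, 0.0], [0.0, 2.0, 0.0], [0.0, 0.0, 2.0]]


def concept_activations(hidden: List[float]) -> List[float]:
    """Project the hidden state onto named concepts (ReLU keeps scores >= 0)."""
    return [max(0.0, sum(w * h for w, h in zip(row, hidden))) for row in W_CONCEPT]


def token_logits(acts: List[float]) -> List[float]:
    """Compute each token's logit from concept activations only."""
    return [sum(w * a for w, a in zip(row, acts)) for row in W_OUT]


def explain_token(hidden: List[float], token: str) -> Dict[str, float]:
    """Split one token's logit into the contribution of each concept."""
    acts = concept_activations(hidden)
    row = W_OUT[VOCAB.index(token)]
    return {name: w * a for name, w, a in zip(CONCEPTS, row, acts)}


if __name__ == "__main__":
    hidden_state = [1.0, 0.0]
    print(token_logits(concept_activations(hidden_state)))
    print(explain_token(hidden_state, "loan"))
```

Because the output depends on the hidden state only through the concept activations, the per-concept contributions returned by `explain_token` sum exactly to the token's logit. That is the property that makes this kind of layer inspectable by construction rather than after the fact.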

Navigating Between Transparency and Creativity

A frequent concern regarding highly interpretable architectures is whether they might hinder emergent behaviors, the spontaneous generalizations and inventive outputs that make large language models so valuable. Steerling-8B dispels this worry by autonomously identifying “discovered concepts,” such as complex subjects like quantum computing learned during training, demonstrating that controlled transparency does not stifle innovation within these systems.

Real-World Benefits Across Diverse Sectors

- User-Focused Solutions: Improved oversight over generated content helps prevent unauthorized use of copyrighted works and better manages sensitive topics including violence or substance abuse online.

- Highly Regulated Industries: In domains like healthcare and finance where fairness is critical, interpretable LLMs allow precise filtering, for example ensuring that loan approval algorithms evaluate financial history fairly, without bias related to race or gender identity.

- Scientific Advancements: Research areas such as drug discovery gain significantly when AI reasoning processes are transparent; scientists require detailed explanations behind promising molecular predictions rather than opaque outputs alone.
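The filtering described for regulated industries can be sketched as follows, assuming a model exposes named concept activations that feed a downstream score. This is a hypothetical illustration, not Guide Labs' API: the concept names, weights, and the functions `suppress_concepts` and `score` are invented for the example.

```python
from typing import Dict, Set


def suppress_concepts(activations: Dict[str, float], blocked: Set[str]) -> Dict[str, float]:
    """Return a copy of the activations with every blocked concept set to zero."""
    return {name: (0.0 if name in blocked else value)
            for name, value in activations.items()}


def score(activations: Dict[str, float], weights: Dict[str, float]) -> float:
    """Weighted sum of concept activations; concepts without a weight count as zero."""
    return sum(weights.get(name, 0.0) * value for name, value in activations.items())


if __name__ == "__main__":
    # Hypothetical activations for one loan application (illustration only).
    activations = {"payment_history": 0.8, "income_stability": 0.5, "gender": 0.9}
    # Hypothetical weights, including an unwanted dependence on a protected attribute.
    weights = {"payment_history": 2.0, "income_stability": 1.0, "gender": -1.0}

    filtered = suppress_concepts(activations, blocked={"gender"})
    print("unfiltered score:", score(activations, weights))
    print("filtered score:  ", score(filtered, weights))
```

Zeroing a named concept removes only its direct contribution to the score. In a real system, other concepts may still correlate with the protected attribute, so a filter like this would be one check among several rather than a guarantee of fairness.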

The Path Forward: Scaling Transparent Models While Maintaining Efficiency

The team at Guide Labs emphasizes that developing inherently understandable deep learning models has shifted from theoretical exploration toward practical engineering solutions. Their current version achieves roughly 90% of the performance seen in larger parameter-count counterparts while demanding less training data due to its streamlined design principles.

This efficiency paves the way for wider adoption across various fields without sacrificing reliability or ethical considerations. Having raised $9 million in funding led by Initialized Capital after graduating from Y Combinator’s accelerator program, Guide Labs aims to expand with larger-scale models alongside API services that enable interactive agent capabilities for developers globally.

“Our present approaches for training deep learning architectures remain primitive,” states their CEO. “By democratizing built-in interpretability today, even before superintelligent agents emerge, we ensure future machine decisions won’t remain hidden behind opaque layers.”