How Nvidia’s Networking Division Is Shaping the Future of AI Data Centers

Visionary Beginnings and Strategic Growth

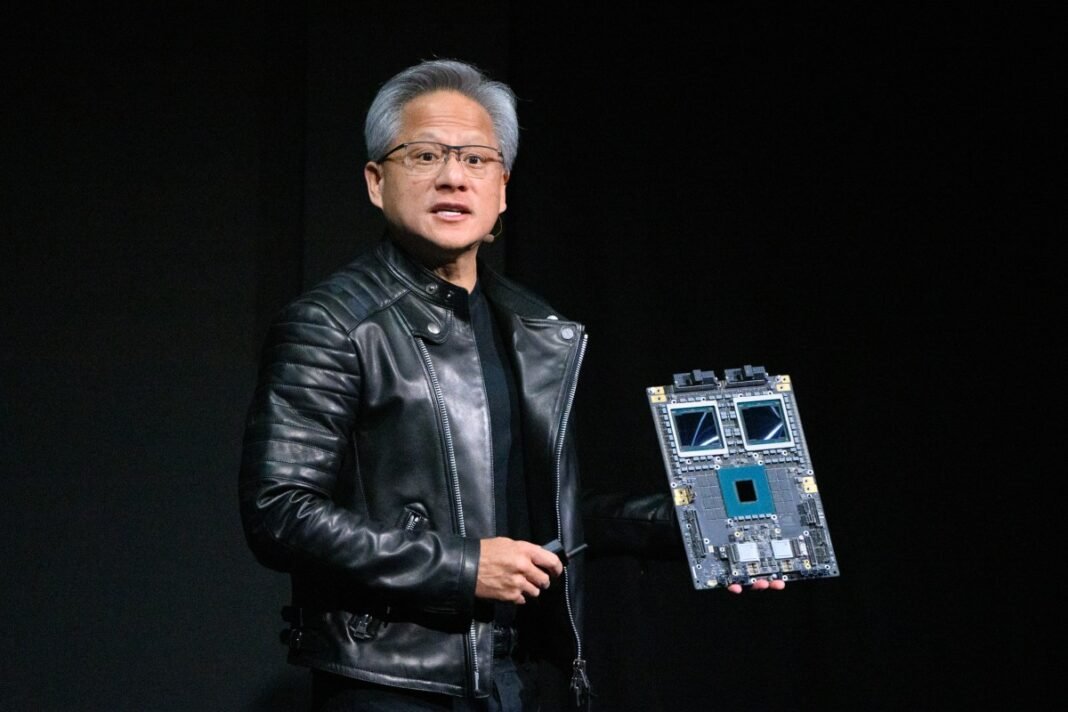

Years before artificial intelligence became a dominant force, Nvidia’s CEO Jensen Huang anticipated the need for specialized hardware tailored to AI workloads. As early as 2010, he advocated for developing chips optimized specifically for AI tasks, well ahead of the explosive demand seen today. A pivotal moment came in 2020 when Nvidia acquired Mellanox Technologies, an Israeli networking innovator established in 1999. This acquisition set the stage for what has rapidly become one of Nvidia’s most dynamic and lucrative business units.

The Surge of a Revenue Giant

Nvidia’s networking segment has quickly risen to become its second-largest revenue contributor after its core GPU compute business. In a recent quarter alone, the division generated approximately $11 billion, a year-over-year increase of more than 260%, and it now accounts for more than $31 billion annually. This rapid expansion places it on par with, or even ahead of, long-established industry leaders such as Cisco over comparable periods.
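To put the growth figure in perspective: a year-over-year increase of 260% means revenue is 3.6 times its level a year earlier. The short Python sketch below works through that arithmetic, assuming the roughly $11 billion figure refers to a single quarter. The function name is illustrative, and the inputs are the article's approximate numbers, not reported financials.

```python
def implied_prior_revenue(current: float, yoy_growth_pct: float) -> float:
    """Revenue one year earlier, given current revenue and YoY growth in percent."""
    return current / (1.0 + yoy_growth_pct / 100.0)


quarter_revenue_bn = 11.0  # approximate quarterly figure cited above (assumed single quarter)
yoy_growth_pct = 260.0     # lower bound cited above ("exceeding 260%")

prior_bn = implied_prior_revenue(quarter_revenue_bn, yoy_growth_pct)
# Growth above 260% implies the year-ago figure was at most this value.
print(f"Implied year-ago quarter: at most about ${prior_bn:.2f} billion")
```

Under these assumptions, the year-ago quarter would have been roughly $3 billion or less.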

Technological Foundations Driving Growth

This division integrates state-of-the-art technologies critical to modern data centers that support large-scale AI training and deployment. Innovations include NVLink technology, which enables ultra-fast communication between GPUs within server racks; InfiniBand switches that support advanced in-network computing; Spectrum-X Ethernet platforms engineered specifically for intensive AI workloads; and co-packaged optics switches designed to boost data transmission efficiency while reducing latency.

The Crucial Role of Networking in Modern AI Systems

Kevin Deierling, senior vice president responsible for networking at Nvidia and former Mellanox leader, highlights how networking transcends traditional roles: “Networking today is no longer just about peripheral connections; it forms the essential backbone supporting complex AI operations.” This transformation reflects how data centers have evolved from simple clusters into highly integrated ecosystems where uninterrupted data flow is vital.

Deierling acknowledges that, despite its significant impact, awareness of Nvidia’s leadership in networking remains limited, largely because of marketing challenges rather than any shortcomings in technology. The company distinguishes itself by delivering comprehensive full-stack solutions instead of isolated components, a strategy that enhances synergy between GPUs and network infrastructure through global partnerships.

An Integrated Ecosystem Strategy

Nvidia sets itself apart by offering tightly coupled hardware-software stacks designed from the silicon up through the system architecture layers. This holistic approach enables customers to efficiently build scalable “AI factories” capable of handling massive computational demands with optimized performance at every level.

Pioneering Innovations Powering Next-Gen Supercomputers

- Nvidia Rubin Platform: A cutting-edge suite featuring six new chips crafted specifically to accelerate large-scale model training workloads with improved energy efficiency.

- Nvidia Inference Context Memory Storage: Enhances real-time inference pipelines by boosting decision-making speed during model execution phases.

- Spectrum-X Ethernet Photonics Switches: Provide high-speed optical connectivity tailored for demanding AI applications while considerably reducing latency and power consumption compared with previous generations.

The Transformative Impact on Computing Architectures

“Today’s networks are not mere peripheral links; they serve as the foundational fabric enabling intricate computations,” explains Deierling. “They function like a nervous system within an ‘AI factory,’ orchestrating vast volumes of data movement essential for training refined models.”

This paradigm shift illustrates why integrating high-performance networking with GPU computing unlocks new potential across industries: autonomous vehicle development platforms processing terabytes per second, natural language processing systems powering real-time translation services used by millions of people daily, and climate modeling simulations that require enormous datasets to move without bottlenecks.

A Glimpse Ahead: Networking as a Defining Competitive Edge

Nvidia’s foresight in combining GPU advancements with next-level networking positions it uniquely amid worldwide investments surpassing $150 billion annually devoted solely to building specialized AI infrastructure. As enterprises race to deploy ever-larger neural networks that demand seamless data transfers across distributed environments, companies providing end-to-end integrated solutions will dominate market share growth throughout this decade and beyond.