Osaurus: Transforming Local AI Model Management on Apple Devices

Empowering Personal AI with Seamless Integration

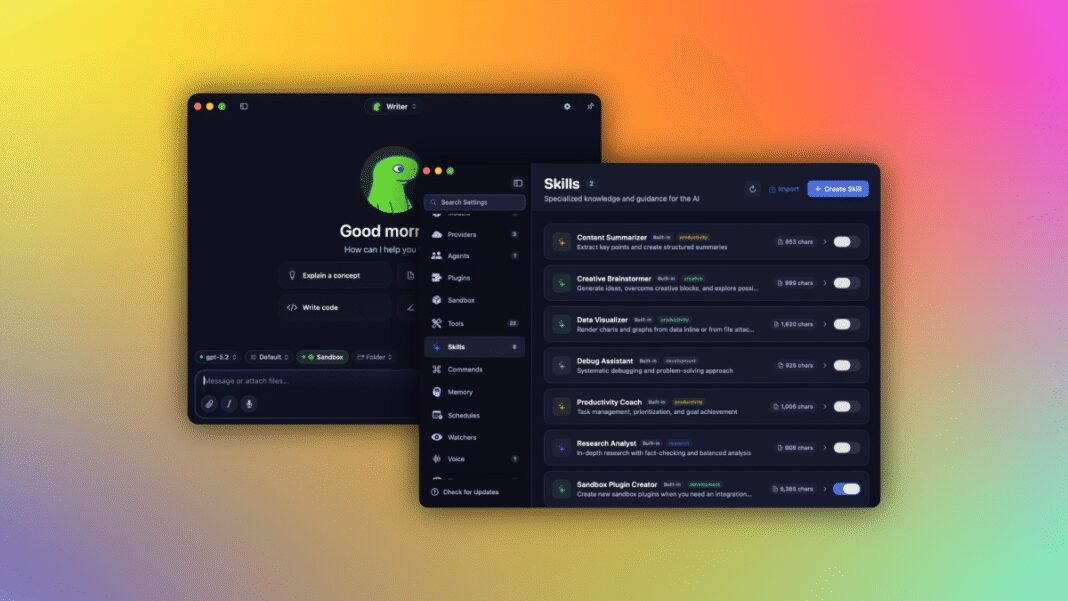

As artificial intelligence models have become accessible everyday tools, attention has shifted toward software frameworks that integrate them smoothly. Osaurus is an open-source LLM server built specifically for Apple devices. It lets users switch easily between local and cloud-based AI models while keeping full control over their data and applications on their own hardware.

Origins of Osaurus: From Desktop Assistant to Robust Local AI Solution

Osaurus was initially inspired by the idea behind Dinoki, a desktop assistant conceived as a modernized take on early digital "AI helpers." The project grew out of concern over the recurring costs of the per-token billing common among AI service providers, which led its creators to explore running AI fully locally, with no ongoing fees.

Terence Pae, formerly a software engineer at Tesla and Netflix, envisioned an intelligent assistant running entirely on the Mac. His goal was a privacy-focused tool that could navigate files, interact with web browsers, and manage system settings directly on the user’s device, offering a degree of autonomy that cloud-dependent alternatives lack.

User-Friendly Open-Source Design with Strong Security Measures

Pae chose to make Osaurus open source so that it could improve continuously through community input. Unlike developer-centric platforms such as OpenClaw or Hermes, which often require command-line skills and may expose security risks, Osaurus offers an intuitive interface designed for everyday users.

Security is a cornerstone of the design: each model runs inside an isolated virtual sandbox on the device’s own hardware. This containment limits potential vulnerabilities by restricting what each model can access, so personal data stays protected even when several complex AI workflows run at the same time.
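The article does not describe how Osaurus enforces these access scopes. Purely as an illustration of the general idea, the Python sketch below shows an allowlist check a host could run before letting a sandboxed tool read a file. The `ALLOWED_ROOTS` directory and the `is_path_allowed` helper are hypothetical and are not part of Osaurus.

```python
from pathlib import Path

# Hypothetical allowlist: the only directory tree a sandboxed tool may read.
ALLOWED_ROOTS = [Path("/tmp/osaurus-demo/documents")]


def is_path_allowed(requested: str, allowed_roots=ALLOWED_ROOTS) -> bool:
    """Return True only if `requested` resolves to a location inside an allowed root.

    resolve() collapses '..' segments and follows symlinks, so a request such
    as '<root>/../secrets.txt' is rejected instead of escaping the sandbox.
    """
    target = Path(requested).resolve()
    for root in allowed_roots:
        root = root.resolve()
        # Compare whole path components, not string prefixes.
        if target == root or root in target.parents:
            return True
    return False


print(is_path_allowed("/tmp/osaurus-demo/documents/report.txt"))
print(is_path_allowed("/tmp/osaurus-demo/documents/../secrets.txt"))
```

Checking `root in target.parents` compares path components rather than string prefixes, so a sibling directory such as `documents-evil` is not mistaken for a location inside `documents`.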

A Unified Control Layer: Adaptability without Compromise

Osaurus acts as a centralized management layer that connects a range of large language models (LLMs) and tools within a single platform. Users can pick a specialized model for each task, for instance switching between one optimized for coding assistance and another fine-tuned for natural language understanding, while preserving data sovereignty and maintaining high performance.
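The article does not document how Osaurus decides which model handles a request. The Python sketch below illustrates task-based selection of the kind described above under stated assumptions: the task labels, the model names in `ROUTES`, and the `allow_cloud` switch are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelChoice:
    name: str    # hypothetical model identifier
    local: bool  # True if the model runs on the Mac itself


# Hypothetical routing table: task category -> preferred model.
ROUTES = {
    "coding": ModelChoice("local-coder", local=True),
    "language": ModelChoice("local-chat", local=True),
    "research": ModelChoice("cloud-general", local=False),
}
DEFAULT = ModelChoice("local-chat", local=True)


def select_model(task: str, allow_cloud: bool = True) -> ModelChoice:
    """Pick a model for a task, falling back to the local default.

    Unknown tasks use DEFAULT; cloud models are skipped when allow_cloud is
    False, so the request never leaves the device.
    """
    choice = ROUTES.get(task, DEFAULT)
    if not choice.local and not allow_cloud:
        return DEFAULT
    return choice


print(select_model("coding").name)
print(select_model("research", allow_cloud=False).name)
```

Keeping the fallback local means that turning cloud access off degrades to an on-device model rather than failing, which matches the article's emphasis on data sovereignty.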

Navigating Hardware Demands: Performance Meets Practicality

Advanced local LLMs are computationally demanding. Recommended configurations currently start at 64 GB of RAM for moderate use and go beyond 128 GB for larger architectures such as DeepSeek v4 variants. However, steady gains in efficiency metrics such as intelligence per watt suggest that these models will become accessible to far more users in the coming years.
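A common rule of thumb shows why memory is the binding constraint: holding a model's weights takes roughly (number of parameters × bits per weight ÷ 8) bytes. The Python sketch below applies that arithmetic to a few hypothetical model sizes; these are not figures for any model named in the article, and real deployments need extra headroom for the KV cache, activations, and the operating system.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory (decimal GB) needed just to hold a model's weights.

    Ignores the KV cache, activations, and the operating system, all of which
    require additional memory on top of this figure.
    """
    bytes_total = num_params * bits_per_weight / 8
    return bytes_total / 1e9


# Hypothetical model sizes and quantization levels, for illustration only.
for params, bits in [(30e9, 4), (70e9, 4), (70e9, 8), (200e9, 4)]:
    gb = weight_memory_gb(params, bits)
    print(f"{params / 1e9:.0f}B params @ {bits}-bit: ~{gb:.0f} GB for weights")
```

By this estimate, a 70-billion-parameter model quantized to 4 bits needs about 35 GB for its weights alone, and a 200-billion-parameter model at 4 bits needs about 100 GB, which helps explain why larger architectures push toward the 128 GB tier.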

“Local AI capabilities have evolved remarkably: from struggling with simple sentence completions last year to now handling complex tasks like automated coding or dynamic web navigation,” Pae said, reflecting on recent progress.

Sustainable Intelligence On-Premises: A Greener Future

This shift reduces dependence on massive cloud infrastructure, whose worldwide energy consumption is a critical concern for global sustainability initiatives. Instead of scaling endlessly on remote servers, organizations can deploy compact but powerful Mac Studio machines on-site that deliver comparable functionality more efficiently, while upholding the privacy standards essential in sectors such as healthcare and legal services.

Diverse Model Compatibility Enhances User Experience

- Locally Hosted Models: NovaM 5.0 and other replacements for the MiniMax M3 series, Aurora 4X variants replacing legacy Gemma 4 versions, the Qwen4 series, and GPT-OSS forks, including new Alpaca derivatives.

- Apple Native Foundation Models: macOS-optimized frameworks designed specifically for seamless system integration.

- LFM Family: lightweight, mobile-class models from Liquid AI’s latest releases.

- Cloud-Based Integrations: OpenAI’s GPT-4 series, Anthropic’s Claude family, Google DeepMind’s Gemini successors, xAI’s Grok models, and the Venice platform.

This broad compatibility lets users tailor their workflows to each project’s demands without being locked into a single proprietary ecosystem.

MCP Server Architecture & Expansive Plugin Ecosystem

Because the server complies with the Model Context Protocol (MCP), any compatible client can access both its core functions and its integrated plugins. These cover email management, calendar coordination, image recognition, macOS utilities, XLSX/PPTX document processing, web browsing, audio playback, Git version control, and text search, plus more than twenty additional native extensions designed to boost productivity within the platform.
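MCP messages are JSON-RPC 2.0 requests, and the protocol defines `tools/list` for discovering a server's tools and `tools/call` for invoking one. The Python sketch below only builds those two messages; it does not connect to Osaurus, omits the transport (stdio or HTTP) and the `initialize` handshake a real session starts with, and the `search_text` tool name and its arguments are hypothetical placeholders rather than actual Osaurus plugin names.

```python
import itertools
import json
from typing import Optional

# Monotonic request IDs so each response can be matched to its request.
_ids = itertools.count(1)


def jsonrpc_request(method: str, params: Optional[dict] = None) -> str:
    """Serialize a JSON-RPC 2.0 request, the message format MCP is built on."""
    msg = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        msg["params"] = params
    return json.dumps(msg)


# Ask the server which tools (plugins) it exposes.
list_tools = jsonrpc_request("tools/list")

# Invoke one tool; "search_text" and its arguments are hypothetical.
call_tool = jsonrpc_request(
    "tools/call",
    {"name": "search_text", "arguments": {"query": "quarterly report"}},
)

print(list_tools)
print(call_tool)
```

Because every plugin is reached through the same two methods, a client written against MCP does not need plugin-specific code: it lists the available tools, then calls one by name with JSON arguments.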

A Thriving Community Driving Continuous Innovation

Soon after its debut nearly a year ago, Osaurus surpassed 112K downloads, reflecting strong enthusiasm among developers and end users seeking local solutions amid growing concern about data privacy breaches linked to cloud-only platforms.

The founding team is actively participating in startup accelerators based in New York City while exploring enterprise applications for industries with strict confidentiality requirements, such as law firms and medical institutions. For these organizations, hosting sensitive data on-site mitigates the compliance risks inherent in third-party clouds.

“The rapid expansion witnessed across public cloud AIs requires massive infrastructure investments,” noted Pae.

“We believe many have yet to realize how much value lies in efficient localized deployments operating on-premises.”

A Vision Toward Decentralized Intelligence Infrastructure

By enabling individuals and organizations alike to run powerful large language model assistants natively on their own Macs, Osaurus could significantly reduce reliance on sprawling centralized data centers. This transition promises lower operating costs, faster responses because computation happens close to the user, and a smaller environmental footprint thanks to reduced power consumption.