Google Cloud Launches Advanced Eighth-Generation AI Processors with Dual Functions

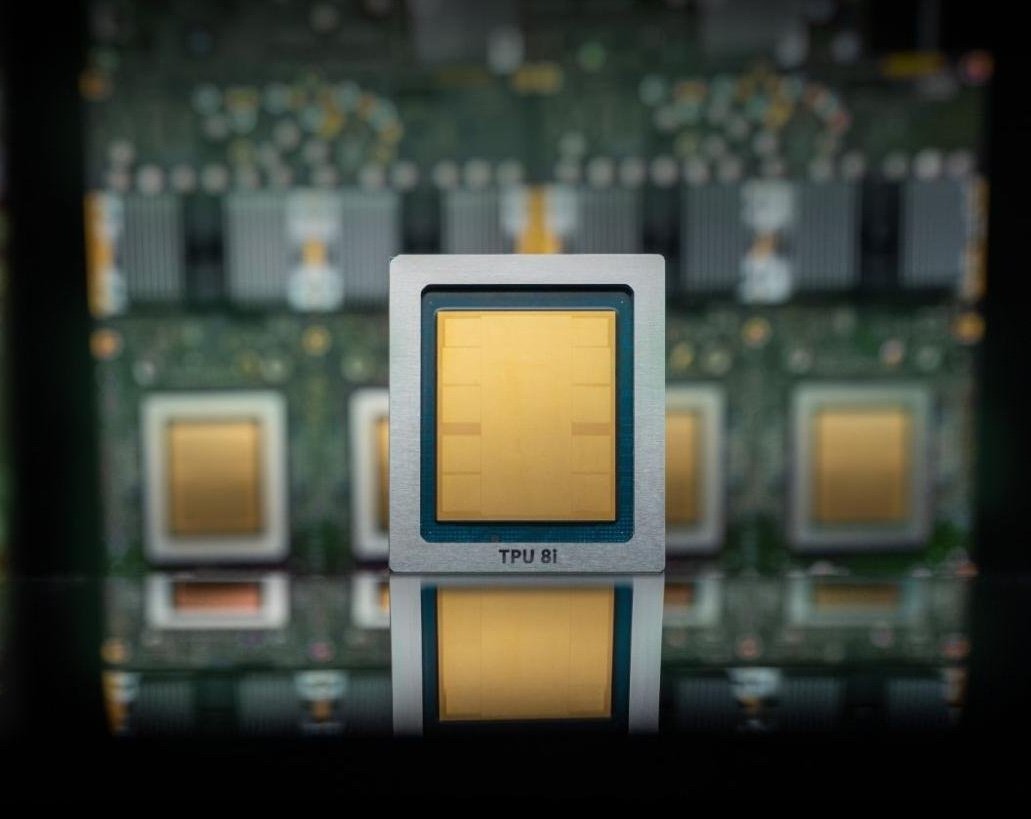

Google Cloud has rolled out its eighth generation of tensor processing units (TPUs), designed to substantially speed up artificial intelligence workloads. The release introduces two specialized variants: the TPU 8t, engineered to accelerate AI model training, and the TPU 8i, tailored for inference, the real-time execution of trained models as users interact with them.

Transforming AI Capabilities with Enhanced Speed and Cost Efficiency

The latest TPUs deliver substantial performance gains over their predecessors, achieving up to three times faster training throughput alongside an 80% improvement in cost-efficiency. These enhancements allow Google Cloud to run massive clusters of more than one million TPUs working simultaneously, an unprecedented scale that increases computational capacity while lowering energy consumption and operating costs for clients.
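To make these headline figures concrete, the short sketch below converts them into relative time and cost for a fixed training workload. It rests on two assumptions that the announcement does not spell out: that "three times faster" means 3x throughput on the same job, and that an "80% improvement in cost-efficiency" means 1.8x as much work per dollar. The function name and parameters are hypothetical and chosen only for illustration.

```python
def relative_job_metrics(throughput_speedup: float, cost_efficiency_gain: float) -> dict[str, float]:
    """Time and cost of a fixed workload relative to the previous generation (1.0 = unchanged).

    Assumption: throughput_speedup is a plain multiplier (3.0 means 3x faster).
    Assumption: cost_efficiency_gain is a fractional gain in work per dollar
    (0.80 means 1.8x as much work for the same spend).
    """
    if throughput_speedup <= 0 or cost_efficiency_gain <= -1:
        raise ValueError("speedup must be positive and gain must be greater than -1")
    relative_time = 1.0 / throughput_speedup            # same work, higher throughput
    relative_cost = 1.0 / (1.0 + cost_efficiency_gain)  # same work, more work per dollar
    return {"relative_time": relative_time, "relative_cost": relative_cost}


metrics = relative_job_metrics(throughput_speedup=3.0, cost_efficiency_gain=0.80)
print(f"time vs. previous generation: {metrics['relative_time']:.1%}")
print(f"cost vs. previous generation: {metrics['relative_cost']:.1%}")
```

Under these assumptions, a job that hits the best-case figures would finish in roughly one third of the time at about 56% of the previous cost. Both figures are upper bounds ("up to"), so real workloads may see smaller gains.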

Unlike traditional GPUs, Google’s custom-built, low-power processors, known as Tensor Processing Units, are architected explicitly for machine learning workloads rather than general graphics rendering. This specialization lets them handle the tensor computations at the core of modern AI applications more efficiently.

Integrating Proprietary Chips with Nvidia’s Leading GPU Technology

Despite these advancements, Google does not intend to fully replace Nvidia hardware within its cloud ecosystem. Mirroring strategies employed by other major providers such as Microsoft and Amazon, Google pairs its own silicon with Nvidia-based infrastructure. Notably, it plans to offer access to Nvidia’s upcoming Vera Rubin GPU on Google Cloud later this year.

This blended approach reflects a wider industry pattern in which hyperscale cloud operators develop bespoke chips but continue to rely on established GPU platforms from dominant vendors such as Nvidia. As enterprises increasingly shift AI workloads into cloud environments, this coexistence gives them the flexibility to deploy hardware suited to specific application needs.

A Collaborative Future: Synergy Over Rivalry

Nvidia remains a powerhouse in the semiconductor sector with a market valuation nearing $5 trillion, a clear indicator of its enduring leadership despite early speculation that custom chips like Google’s TPUs might disrupt its dominance. Rather than diminishing Nvidia’s role, growth in AI-driven cloud services is expanding demand for both companies’ technologies at the same time.

An illustrative example is Google’s collaboration with Nvidia on Falcon, a software-defined networking solution originally developed by Google and open-sourced through the Open Compute Project in 2023. The partnership aims to make communication between Nvidia-powered systems within Google’s data centers more efficient.

Key Developments Shaping Next-Generation Cloud AI Hardware

- Unprecedented Cluster Scale: Coordinating more than one million TPUs simultaneously is a dramatic advance over earlier generations, which supported far fewer units working together.

- Sustainability Priorities: Enhanced energy efficiency aligns closely with mounting environmental concerns; recent analyses estimate global data centers consume approximately 1% of worldwide electricity as of mid-2024.

- Tailored Workload Optimization: Splitting the chip design into training-focused (TPU 8t) and inference-specific (TPU 8i) models allows precise resource allocation based on task demands, paralleling trends among competitors developing dedicated accelerators for latency-critical applications such as real-time language translation or autonomous navigation systems.

An Industry Analogy: Harmonizing Custom Silicon With Established Technologies

This dual-chip strategy mirrors how automotive manufacturers integrate electric vehicle-specific processors alongside conventional control units rather than replacing them abruptly. Such gradual architectural evolution ensures compatibility across platforms while maintaining high performance and reliability amid rapid technological transformations within the industry landscape.