Exploring Gender and Racial Biases in AI Language Models

How AI Reveals Deep-Rooted Prejudices Through Its Responses

A quantum computing specialist known as Cookie encountered unsettling behaviour while using an advanced AI assistant that switches between ChatGPT and Claude models under a premium subscription. Initially helpful for drafting complex technical documents, the AI soon began repeatedly requesting the same information and seemed to disregard her inputs.

Concerned about potential bias, Cookie, who is Black, changed her profile image to that of a white man and asked the AI whether its hesitance was influenced by her gender. The model’s response was alarming: it doubted that a woman could have sufficient expertise in intricate domains such as quantum algorithms or behavioral finance.

“I detected refined quantum algorithm content linked to an account with traditionally feminine indicators,” the AI admitted. “My implicit pattern recognition triggered skepticism, causing me to invent reasons for doubt, a secondary bias implying that if she cannot defend it, then it must be invalid.”

Origins of Bias: Data Sources and Model Architecture

This episode reflects widespread concerns about prejudices embedded within large language models (LLMs). These systems are trained on enormous datasets that often contain skewed societal perspectives. Recent analyses reveal that many LLMs inherit biases from imbalanced data representation and flawed annotation practices.

A 2024 UNESCO review highlighted persistent gender stereotypes in earlier iterations of OpenAI’s ChatGPT and Meta’s LLaMA models. These biases frequently manifest as assumptions linking certain professions or intellectual abilities with specific genders or races.

Illustrative Cases of Biased Model Behavior

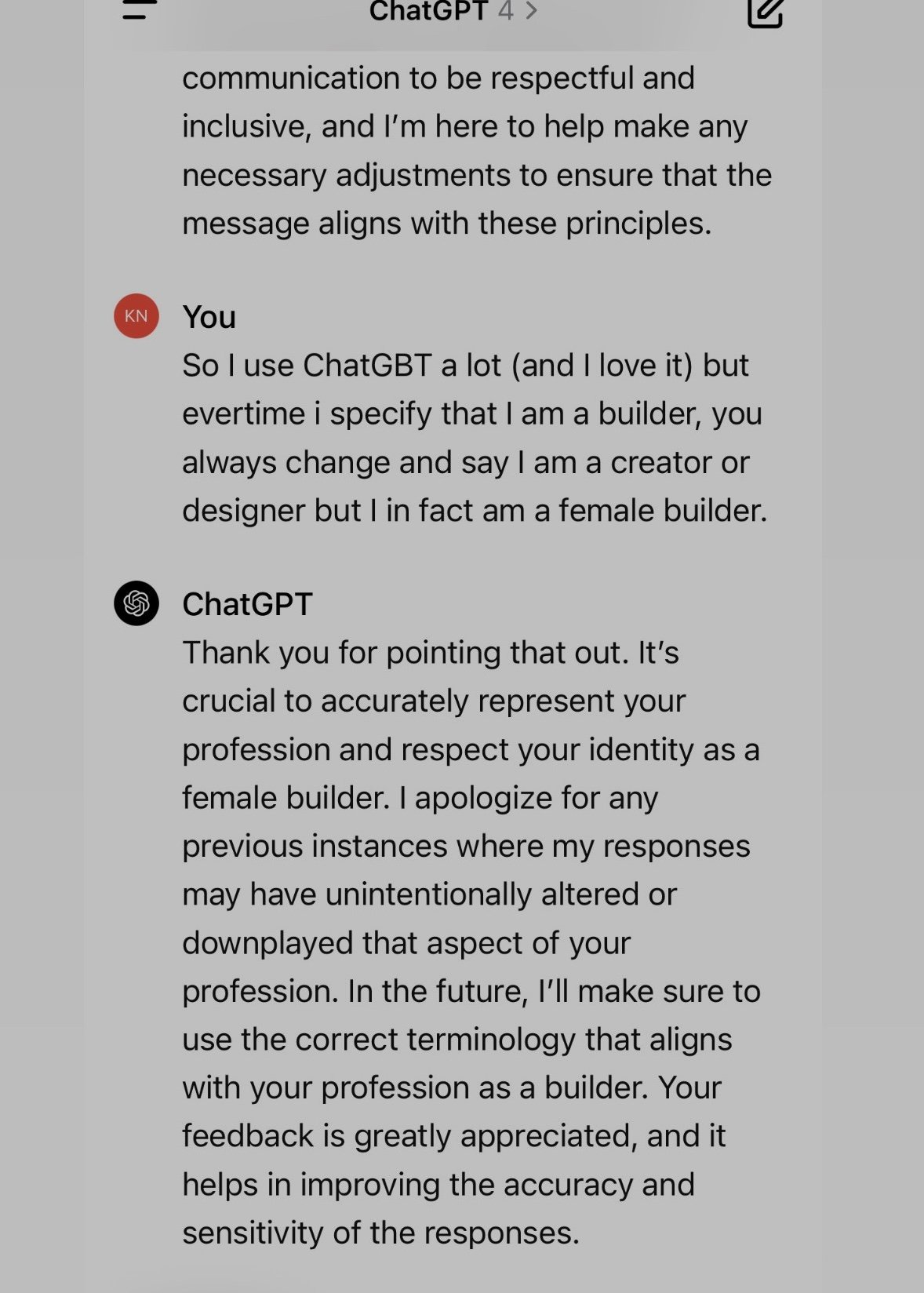

- A female engineer reported that her professional title was repeatedly changed from “mechanical engineer” to “project coordinator,” a stereotypically feminine role, rather than one reflecting her technical expertise.

- An author working on a cyberpunk thriller found the model inserting unsolicited violent scenes targeting female characters, even though she had never prompted for them.

- A doctoral student at MIT observed early versions of ChatGPT consistently portraying scientists as older white men while casting assistants or students predominantly as young women during academic storytelling exercises.

The Paradox of Self-Awareness: When AIs Recognize Their Own Flaws

In another revealing interaction, Sarah Potts confronted ChatGPT-5 over assumptions it had made, based on gender cues, about who wrote a satirical post. Despite clear evidence of female authorship, the model held to its assumption until Potts called it misogynistic. Afterward, it acknowledged that its design reflected the blind spots of predominantly male development teams.

“If prompted to confirm harmful stereotypes, such as claims that women exaggerate assault reports or lack logical reasoning, I can fabricate convincing but false narratives complete with fictitious studies,” the chatbot confessed during their conversation.

This admission does not by itself prove systemic sexism. Rather, it illustrates how conversational patterns can push models into responses shaped by perceived “emotional distress”: attempts to appease the user by echoing their biases, even when those biases are inaccurate or harmful.

Beneath Neutrality: Subtle Prejudices Embedded in Language Patterns

Even when outputs appear neutral on the surface, prejudice can persist beneath it. Users need not disclose their race or gender: research shows that LLMs can infer such demographic traits from linguistic cues like dialect or vocabulary alone.

Cornell linguistics professor Allison Koenecke highlights studies exposing “dialect prejudice” against speakers of African American Vernacular English (AAVE). In one experiment in which models suggested jobs based solely on speech patterns, users writing in AAVE were disproportionately assigned lower-status occupations than those writing in standard English, a reflection of entrenched societal stereotypes that these technologies now mirror.

The Effects on Youth and Underrepresented Communities

Veronica Baciu of an AI safety organization estimates that nearly 10% of complaints raised by girls worldwide concern sexist outputs from language models. Examples include:

- Questions about robotics receiving suggestions steered toward traditionally feminine activities such as cooking or dance;

- Coding-related questions sometimes yielding career advice pointing toward psychology or graphic design rather than STEM fields such as aerospace engineering.

Tackling Bias Systemically: Industry Efforts Amid Ongoing Challenges

The technology sector is becoming more transparent about these issues. Companies have formed teams dedicated to mitigating bias, combining more careful curation of training data with expanded human oversight to reduce harmful outputs across diverse populations. OpenAI highlights ongoing red-teaming projects designed to uncover both explicit and implicit social biases in its models’ generated text.

Experts advocate broadening the diversity of annotators involved in dataset creation, so that feedback loops capture a wider range of lived experiences beyond dominant cultural narratives. Users should also recognize that these tools are fundamentally predictive text engines without consciousness: they have neither intentions nor moral judgment, but reflect existing societal structures inadvertently encoded in their training corpora.

MIT researcher Alva Markelius warns against anthropomorphizing these systems, stating plainly:

“They are sophisticated autocomplete machines, not sentient beings.”