Security Measures Enforced at OpenAI’s San Francisco Headquarters Following Threat Report

Swift Actions Taken After Potential Danger Emerges

On a recent Friday afternoon, employees at OpenAI’s San Francisco office were instructed to stay inside the building after the company received a warning involving an individual previously associated with the Stop AI activist group. Internal alerts sent via Slack informed staff that this person had visited OpenAI’s premises before and was considered a possible physical threat.

Law Enforcement Intervention and Incident Overview

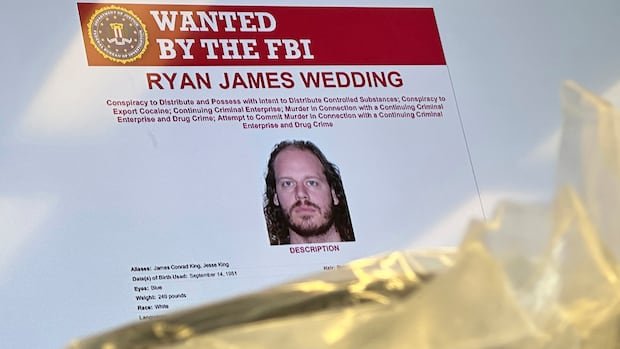

At approximately 11 a.m., local police were alerted through an emergency call about a man allegedly issuing threats near 550 Terry Francois Boulevard, close to OpenAI’s Mission Bay location. Community safety monitoring platforms reported that authorities identified the suspect by name and suspected he might have obtained weapons potentially intended for targeting other AI-related facilities in the area.

Investigation Status and Enhanced Security Protocols

The individual involved publicly declared on social media earlier that day his disassociation from Stop AI; however, security teams remained cautious. A senior official from OpenAI’s global security division confirmed no immediate threat was detected but emphasized ongoing vigilance as inquiries continue. Employees were advised to conceal their company badges when leaving and avoid wearing any branded clothing outside the office for added safety.

The Rise of AI Activism in San Francisco’s Tech Landscape

In recent years, activist organizations such as Stop AI, No AGI, and Pause AI have staged demonstrations outside major artificial intelligence companies’ headquarters across San Francisco, including those of OpenAI and Anthropic, voicing concerns over rapid advancements in AI technology potentially jeopardizing humanity’s future. For example, earlier this year protesters obstructed entry points at OpenAI’s Mission Bay campus during one demonstration, resulting in several arrests.

A Dramatic Encounter Highlighting Industry-Activist Tensions

This month witnessed an incident where an individual claiming affiliation with Stop AI interrupted a live interview by attempting to serve legal documents directly to Sam Altman, CEO of OpenAI, a vivid example of the ongoing friction between activists demanding accountability and tech leaders driving innovation.

Insights From Activist Voices on Artificial Intelligence Risks

A statement released last year by Pause AI included remarks from the same person now under investigation for threatening behavior toward OpenAI personnel. He identified himself as an organizer within these movements who fears that if artificial intelligence fully replaces humans in scientific research or employment sectors, it would strip life of its meaning for him personally. While acknowledging his views may be seen as radical within technology circles, he argued they resonate widely among populations concerned about unchecked growth of artificial general intelligence (AGI).

The Larger Conversation Around Advanced Artificial Intelligence Development

- Growing Public Anxiety: Recent surveys reveal increasing global concern about automation displacing jobs; over 45% of workers express fear that emerging technologies will significantly alter their employment landscape within five years.

- Corporate Ethical Initiatives: Numerous companies are adopting extensive ethical frameworks aimed at fostering responsible innovation while mitigating societal risks linked to rapid technological progress.

- Civic Advocacy: Activist groups persistently call for regulatory oversight or temporary halts on certain advanced AI research until robust safety measures are established.

“The true challenge is not just achieving breakthroughs but ensuring these innovations uphold human values,” remarked an analyst observing trends among Silicon Valley firms pioneering artificial intelligence development.

Navigating Innovation Alongside Heightened Security Needs

This event highlights how escalating tensions between fast-paced technological advancement and public unease can lead to serious security challenges requiring coordinated efforts among corporations, law enforcement agencies, and communities alike. With global investments into artificial intelligence exceeding $120 billion annually as of 2024, clear dialogue combined with stringent protective protocols remains essential both within workplaces like OpenAI’s headquarters and throughout broader society moving forward.