Breakthroughs in Google’s Gemini AI for Android Devices

Streamlined Multitasking with Intelligent Automation

Google has introduced a set of AI features driven by Gemini Intelligence that aim to improve multitasking on Android smartphones. The assistant can now handle complex, multi-step tasks, such as moving a grocery list from your notes app straight into another app's shopping cart. Users trigger these actions by holding down the power button and describing their request aloud, and Gemini uses what is currently on screen to understand their intent. Crucially, it always asks for the user's approval before completing a transaction or other final step.

Advanced Browsing Assistance and Interactive Content Summaries

Building on earlier experimental tools that could navigate the web and handle scheduling on their own, Google is now bringing these capabilities directly into Chrome on Android. By mid-2024, users will be able to have Gemini summarize a webpage or answer questions about what they are viewing, mirroring the AI assistance already available on desktop. The feature aims to reduce information fatigue by delivering clear insights without leaving the browser.

Effortless Form Filling Powered by Personal Intelligence

Another notable addition is smart form autofill, which draws on data from Google's opt-in Personal Intelligence system. This speeds up form completion while keeping users in control: a simple toggle turns the feature on or off at any time.

Revolutionizing Voice Input with Rambler Dictation

The Gboard keyboard now integrates Gemini's multimodal intelligence through a new dictation tool called Rambler. It captures natural speech, transcribes it accurately, and filters out filler words such as "um" and "uh." The result is polished text that needs minimal manual correction, a significant step beyond conventional voice-to-text tools.

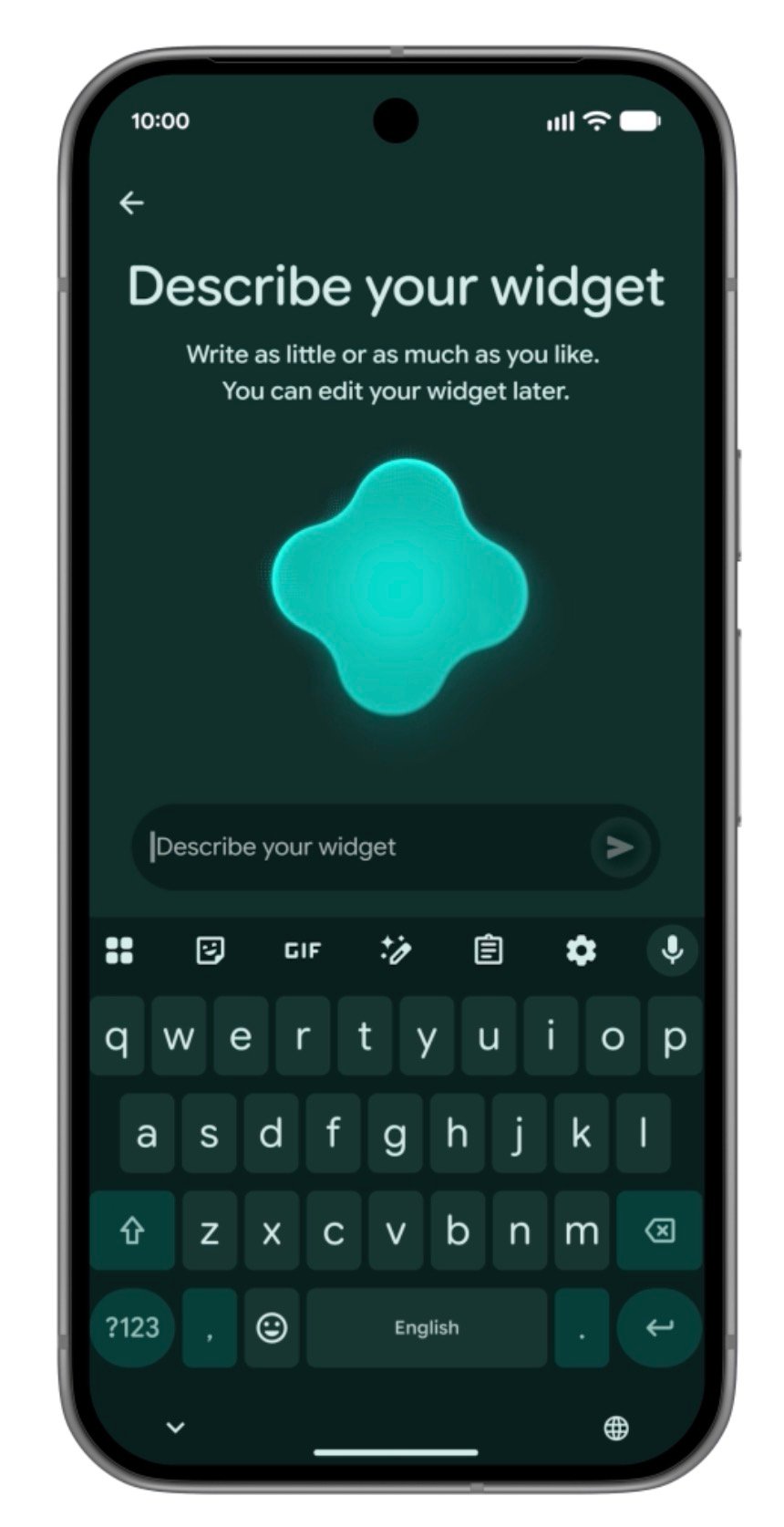

Create Personalized Widgets Using Everyday Language

Tapping into the emerging trend of vibe coding, in which software is generated from conversational prompts, Google lets Android users create custom widgets simply by describing what they want in natural language. For example, you might ask for a widget that suggests "three quick vegan dinner ideas each week," and Gemini builds it automatically.

This mirrors similar efforts across the tech industry; for example, last year the startup NovaTech launched an AI-powered mini-app creator that lets users build practical tools from simple prompts.

User Interface Consistency Rooted in Material 3 Design Standards

All of the new features follow Google's Material 3 design guidelines, giving them a consistent look and intuitive behavior across every supported device that receives the update.

Rollout Schedule Across Devices

- The latest Samsung Galaxy phones and Google Pixel phones will be among the first to receive these Gemini capabilities, starting in summer 2024.

- A wider rollout to additional Android handsets is planned for the rest of 2024.

"By embedding sophisticated AI directly into everyday mobile tasks, from managing shopping lists to refining voice dictation, Gemini is transforming how we engage with our smartphones."