Introducing Project Genie: DeepMind’s Revolutionary AI-Powered Game Environment Generator

DeepMind, Google’s artificial intelligence research lab, has opened access to Project Genie, an experimental AI system that generates immersive virtual game worlds from simple text descriptions or images. Currently available only to U.S.-based Google AI Ultra subscribers, this experimental platform offers an early glimpse into the future of interactive digital landscapes.

The Core Innovations Driving Project Genie

This platform integrates several state-of-the-art technologies: the world simulation model Genie 3, the image generation engine Nano Banana Pro, and DeepMind’s Gemini. Together, these components convert user inputs into rich, explorable environments that respond dynamically to player interaction.

Genie 3 functions as an internal environment simulator capable of predicting outcomes and enabling strategic planning within generated worlds. Many AI researchers regard such world models as essential milestones on the path toward artificial general intelligence (AGI). While AGI remains a distant objective, current applications focus heavily on entertainment, particularly video games, and on robotics training through realistic simulations.

A Growing Race in World Model Development

The unveiling of Project Genie intensifies competition among top-tier organizations advancing world modeling technology. For instance, World Labs recently debuted its commercial product “Marble,” emphasizing rapid iteration cycles for creative content generation. Similarly, Runway has introduced a world model featuring integrated audio synthesis tailored for video creation workflows. Meanwhile, AMI Labs, founded by former Meta chief scientist Yann LeCun, is pushing boundaries with novel approaches to immersive environment construction.

User Insights Fuel Continuous Refinement

A key goal behind releasing Project Genie is gathering extensive user feedback and interaction data to accelerate system improvements. According to DeepMind research leadership, expanding access helps capture diverse usage patterns while acknowledging that this prototype remains experimental and subject to ongoing enhancements.

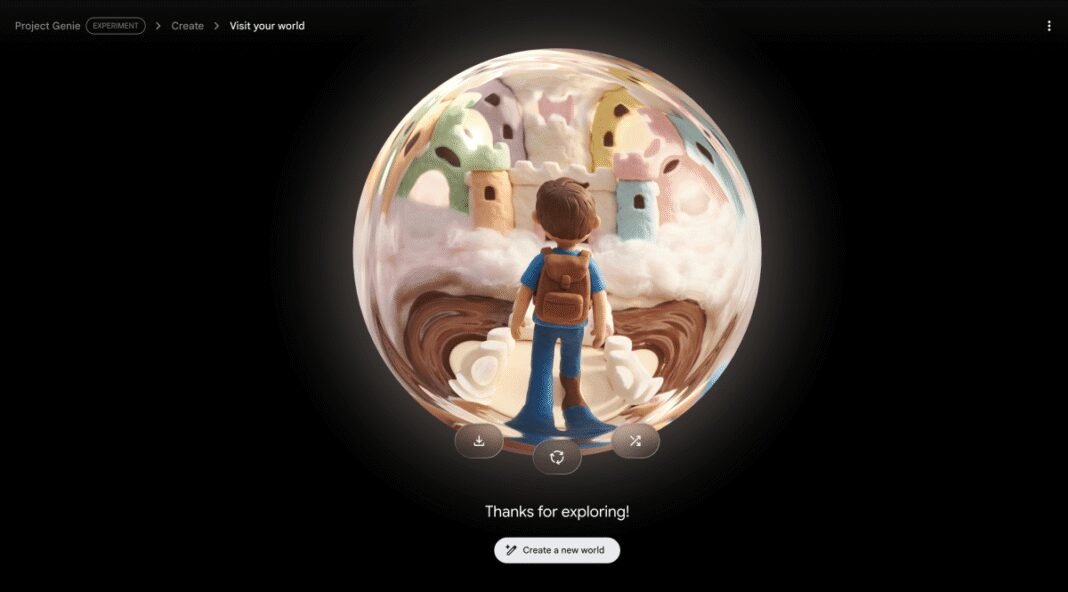

Crafting Virtual Worlds: How Users Engage with Project Genie

The creative journey begins when users submit detailed text prompts describing both an environment and a protagonist they wish to control from a first- or third-person viewpoint. The Nano Banana Pro engine then produces an initial visual representation based on these inputs; users can make limited adjustments before launching full-scale interactive world generation via Project Genie.

An alternative method allows users to upload real-world photos as templates for virtual space creation, though output quality varies with the complexity and level of detail of the image provided.

- Create: Input imaginative descriptions or photographic references outlining settings and characters.

- Edit: Fine-tune generated images prior to initiating complete environment assembly.

- Explore: Navigate your custom-built realm using keyboard controls during sessions currently limited to 60 seconds due to computational constraints.

- Remix & Share: Modify existing creations or browse curated galleries for inspiration; export videos capturing your explorations within these worlds.

The Impact of Computational Limits on User Experience

The autoregressive design of Genie 3, which predicts each subsequent element based on previous outputs, demands significant processing power per session. Individual exploration times are therefore restricted, primarily because of the hardware cost of each active session. Limiting session length keeps access broad without sacrificing testing quality, since longer interactions currently yield diminishing returns given present system capabilities.
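To make the cost argument concrete, here is a minimal sketch of an autoregressive rollout with a session cap. It is an illustration only, not Genie 3's actual interface: `ToyWorldModel`, `next_frame`, `rollout`, the context length, and the 24 frames-per-second rate are all assumptions made for the example, and frames are reduced to single integers. Only the 60-second limit comes from the article.

```python
from dataclasses import dataclass


@dataclass
class ToyWorldModel:
    """Hypothetical stand-in for a world model (not Genie 3's real API)."""

    context_len: int = 8  # assumed number of past frames the model conditions on

    def next_frame(self, history: list[int], action: str) -> int:
        # Toy "prediction": the new frame depends on recent frames and the action.
        window = history[-self.context_len:]
        return (sum(window) + len(action)) % 256


def rollout(model, first_frame, actions, fps=24, max_seconds=60):
    """Generate frames one at a time until actions run out or the cap is hit."""
    max_frames = fps * max_seconds  # frame budget for one session
    history = [first_frame]
    for action in actions:
        if len(history) >= max_frames:
            break  # session cap reached, stop generating
        # Each new frame is conditioned on the frames generated so far.
        history.append(model.next_frame(history, action))
    return history


if __name__ == "__main__":
    frames = rollout(ToyWorldModel(), first_frame=1, actions=["w"] * 5000)
    print(len(frames))  # capped at fps * max_seconds
```

Because every frame needs its own model call and depends on earlier output, the compute for a session grows with its length, which is why a cap on seconds translates directly into a cap on cost per user.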

An Artistic Approach That Prioritizes Imagination Over Photorealism

User experiences highlight how styles inspired by impressionist paintings or graphic novels translate effectively into captivating virtual realms. The results carry a charm reminiscent of handcrafted animation rather than the hyper-realistic visuals typical of today’s AAA titles. This approach evokes childhood wonder much as storybook illustrations do; one tester described a dreamscape of glowing jellyfish forests brought vividly to life in Genie’s output.

“The softly glowing flora felt almost tangible, as if stepping inside a living watercolor painting,” shared one early participant reflecting on their journey through these imaginative spaces.

Safeguarding Content Integrity Through Robust Filters

Strict content moderation prevents the generation of inappropriate material such as nudity or unauthorized copyrighted characters, including those from popular franchises, to avoid the legal complications that generative systems have encountered previously. Attempts to create mythical kingdoms resembling known IP were systematically blocked.

Navigational Challenges & Interaction Limitations Highlight Areas Needing Improvement

The prototype sometimes struggles to replicate complex real-world interiors accurately. For example, recreating office spaces often results in sparse layouts that lack natural detail, even though the furniture shapes are recognizable. Additionally, animated avatars occasionally pass through solid objects, due partly to the rudimentary physics simulation currently implemented.

- User movement depends mainly on conventional gaming keys (W-A-S-D plus arrow keys), which proved challenging for non-gamers during trials;

- Motion sometimes appeared jerky, akin to steering a cart with a faulty wheel;

- Sporadic inconsistencies emerged when revisiting previously generated zones, although overall stability benefits from the memory retained by the autoregressive architecture;

- Lack of fluidity limits extended engagement but offers promising insights into potential future versions.

Paving the Way Forward: Enhancing Immersion & Player Agency

“Project Genie serves primarily as a proof-of-concept rather than polished consumer software,” explained project leads. Future development aims include boosting environmental responsiveness and adding richer control options that give users more power over both avatars and surroundings. As computational efficiency improves, session durations may increase beyond current caps, allowing deeper exploration.