Bluesky’s 2025 Transparency Report Highlights Robust Growth and Strengthened Trust & Safety Protocols

Emerging as a notable alternative to platforms like X and Threads, Bluesky has released its first-ever transparency report, covering 2025. The document sheds light on the work of the platform’s Trust & Safety team, including advancements in age verification compliance, monitoring of influence operations, and the implementation of automated content labeling systems.

Explosive User Growth Drives Platform Engagement

In 2025, Bluesky saw a remarkable surge in its user base, which expanded by nearly 60% from approximately 25.9 million to over 41 million accounts. This total encompasses both users on Bluesky’s own infrastructure and those operating independently within the decentralized network powered by the AT Protocol.

This rapid expansion fueled unprecedented activity levels: users created around 1.41 billion posts, representing more than 60% of all content ever published on the platform. Of these contributions, roughly 235 million posts included media elements such as images or videos, accounting for nearly two-thirds of all media historically shared on Bluesky.

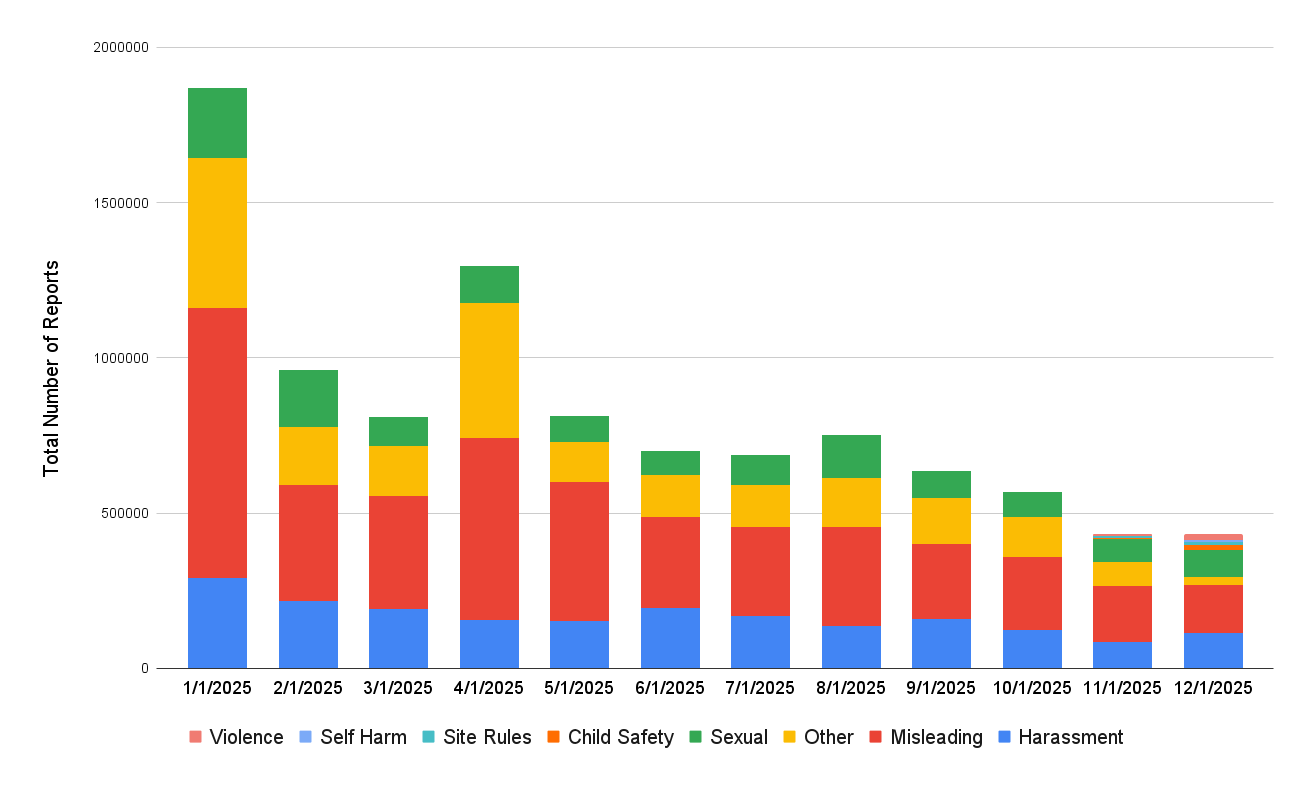

User-Driven Moderation Reports See Significant Uptick

The rise in membership was accompanied by a sharp increase in moderation reports submitted by community members: a jump of about 54%, climbing from roughly 6.48 million reports in 2024 to close to 10 million reports last year. This growth closely parallels overall platform expansion during that timeframe.
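As a rough check on the claim that report volume tracked user growth, the two percentages can be recomputed from the rounded figures quoted in this article. The sketch below is illustrative only: the function name `percent_change` is made up for this example, and because the inputs are rounded ("over 41 million", "close to 10 million"), the results are approximations rather than the report's exact numbers.

```python
def percent_change(old: float, new: float) -> float:
    """Return the relative change from old to new, in percent."""
    return (new - old) / old * 100.0


# Rounded figures as quoted in the article (assumed inputs, not exact counts).
accounts_growth = percent_change(25.9e6, 41e6)   # accounts at start vs. end of 2025
reports_growth = percent_change(6.48e6, 10e6)    # user reports, 2024 vs. 2025

print(f"Account growth: {accounts_growth:.1f}%")
print(f"Report growth:  {reports_growth:.1f}%")
```

With these inputs, accounts grew by roughly 58% and reports by roughly 54%, which is consistent with the article's description of the two trends moving together.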

An estimated 3% of active users (approximately 1.24 million individuals) filed at least one report during the year. The most commonly flagged issues were misleading content, including spam, which made up nearly 44% of reports; harassment, at just under 20%; and sexual content, at about 13.5%.

- Misleading Content: Over 4 million reports fell into this category, with spam alone accounting for approximately 2.5 million cases.

- Harassment: Hate speech dominated here, with around 55,000 complaints; other significant subcategories included targeted harassment (~42,500), trolling (~29,500), and doxxing (~3,200).

- Sexual Content: Most issues involved improper labeling, where adult material lacked the metadata tags that enable users' filtering controls; smaller numbers addressed nonconsensual intimate imagery (~7,500), abusive content (~6,100), and deepfake-related violations (more than 2,000).

A miscellaneous “other” category accounted for just over 22% of reports. These did not fit neatly into the classifications above or into smaller groups such as violence or child safety concerns.

Tackling Toxic Interactions Through Advanced Algorithms

A notable achievement was a dramatic 79% reduction in daily moderation reports related to antisocial behavior, following the deployment of an AI-driven system that detects toxic replies and automatically hides them behind an additional click, similar to a feature used by X (formerly Twitter). Furthermore, the monthly rate of moderation reports per thousand active users dropped by more than half between January and December.

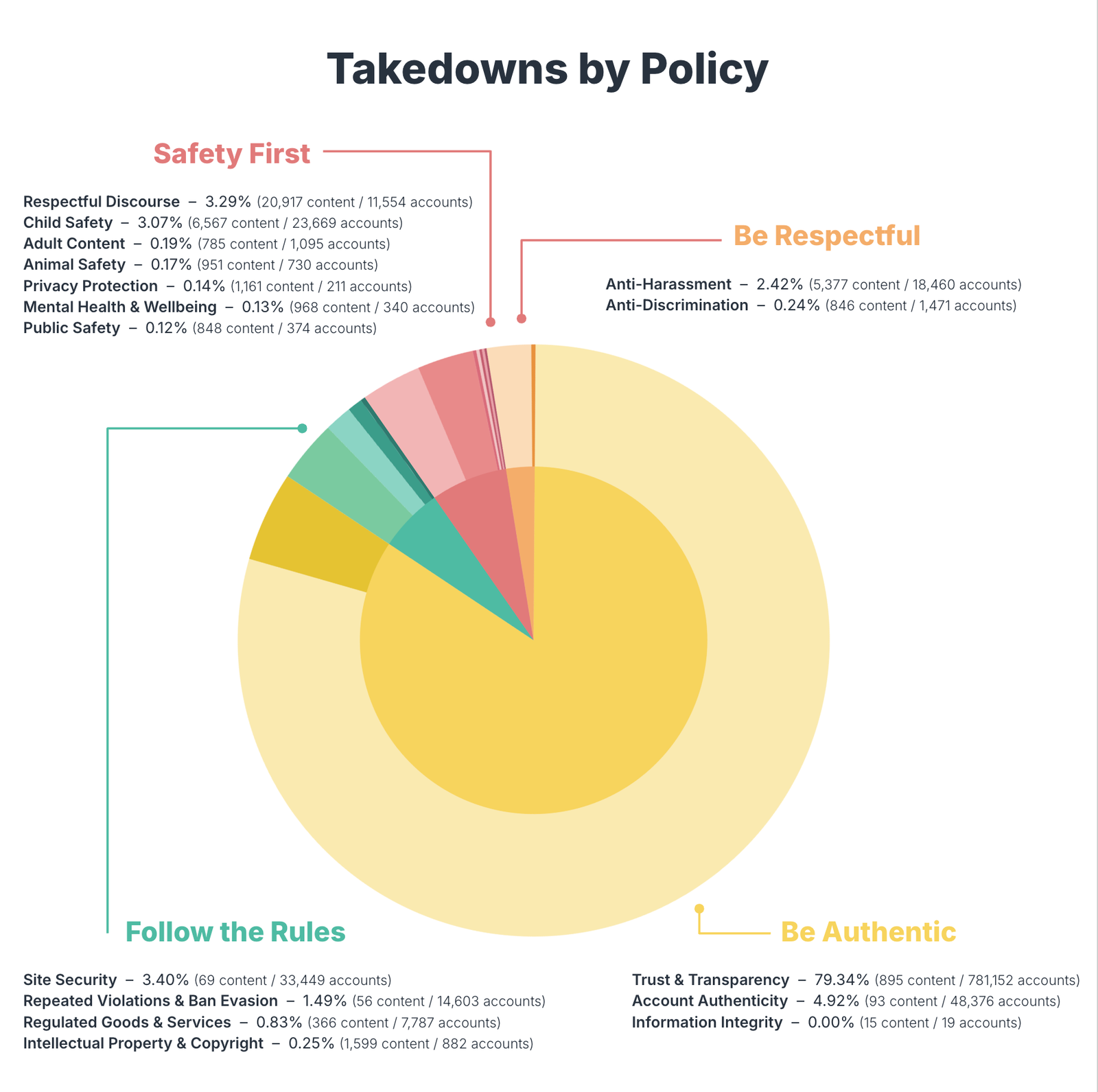

Dramatic Increase in Content Removal Amid Heightened Legal Pressure

The company reported substantial growth in enforcement actions last year: it removed approximately 2.44 million pieces of content or accounts. This marks a significant rise compared with previous years, when only tens of thousands were taken down annually through a combination of manual review and automated systems.

- Around

- The automated tools deactivated close to

- A combined total exceeding six thousand records were removed following moderator intervention;

- An additional few hundred items were eliminated automatically due to policy breaches;

- The platform issued over 3,000 temporary suspensions alongside nearly 15,000 permanent bans targeting ban evasion tactics, primarily linked to spam networks, impersonation schemes, or coordinated disinformation campaigns.

A Strategic Shift Toward Labeling Rather Than Deletion

The data reveals that although account removals more than doubled compared with prior periods (rising from just over one million previously), Bluesky predominantly favored applying labels instead of outright deletions whenever feasible, implementing roughly

This nuanced approach aligns well with contemporary social platforms’ preference for sophisticated content management strategies rather than blunt censorship techniques.

Navigating Emerging Challenges: Legal Requests Surge Fivefold While Influence Operations Are Countered

The volume of legal requests received from authorities, including law enforcement agencies, regulatory bodies, and legal representatives, soared last year, reaching almost 1,500 inquiries versus fewer than 240 during the previous calendar year.

This sharp escalation reflects intensifying scrutiny faced globally across social networks amid growing demands for accountability concerning harmful online activities.

Additionally, Bluesky identified suspicious influence operations, primarily originating from foreign actors likely based outside Russia, leading it to remove upward of 3,500 suspect accounts aimed at manipulating discourse on the platform.

This comprehensive transparency initiative represents Bluesky’s first detailed public disclosure extending beyond standard moderation summaries. It covers broader areas such as regulatory compliance and account verification procedures, alongside ongoing trust-building measures tailored specifically for decentralized social networking environments.